I revived my old Android phone by turning it into a better voice assistant than any…

Aside from its solid compatibility with smart devices, Home Assistant has several tricks up its sleeve. Its trigger-action automation workflows are simple enough for beginners yet deep enough to keep tinkerers like yours truly hooked for a long time. Then there are community blueprints, official (and third-party) integrations, and add-ons (or apps, as they’re called in recent releases).

The best part? Home Assistant receives new updates every month, and each release adds more quality-of-life tweaks and cool features to tinker with. While the continue-on-error automation action and the energy dashboard improvements are pretty neat additions to HASS, native wake word detection in the (Android-based) Companion app is hands-down the best feature of the 2026.3 update. That’s because it turned my old smartphone into a Home Assistant satellite for querying (and controlling) my smart devices using just my voice (and my self-hosted LLMs).

Before I begin, I should point out that it was technically possible to use an Android device as a Home Assistant satellite even before the 2026.3 update came along. Unfortunately, the process was quite cumbersome: you’d often have to configure multiple apps, both on your Home Assistant hub and on the Android phone, just to use the latter as a satellite. The March 2026 update, however, added native wake word detection to the Home Assistant Companion app. Your Android device can now listen for specific phrases and activate the built-in HASS assistant when it hears them, without a convoluted chain of apps and integrations.

Home Assistant’s hot word detection is powered by microWakeWord, a TensorFlow-based engine so lightweight that its models can run on mere microcontrollers. As a result, wake word detection happens entirely on the smartphone instead of relying on some external cloud, and that’s what got me excited about this feature. I’ve got an old Poco M6 Pro that tends to slow down if I open too many browser tabs, yet microWakeWord detection responds instantly on this aging budget phone.
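
microWakeWord runs a small neural network over short slices of audio, which yields a stream of per-frame "was that the wake word?" probabilities. A common way to turn such noisy scores into a single trigger is to average the most recent scores and fire once that average crosses a cutoff. The Python sketch below illustrates that general idea only. It is not microWakeWord’s or the Companion app’s code: the `SlidingWindowDetector` class, the 0.9 cutoff, and the five-frame window are illustrative assumptions, and the per-frame probabilities are assumed to come from a model we don’t show.

```python
from collections import deque
from typing import Iterable, Iterator


class SlidingWindowDetector:
    """Hypothetical detector: fires when the mean of the last `window_size`
    per-frame probabilities reaches `cutoff`. Illustrative only."""

    def __init__(self, cutoff: float = 0.9, window_size: int = 5) -> None:
        self.cutoff = cutoff
        # A bounded deque silently drops the oldest score when full.
        self.window: deque = deque(maxlen=window_size)

    def update(self, probability: float) -> bool:
        """Feed one frame's probability; return True if the wake word fires."""
        self.window.append(probability)
        if len(self.window) < self.window.maxlen:
            return False  # not enough history to judge yet
        average = sum(self.window) / len(self.window)
        if average >= self.cutoff:
            self.window.clear()  # reset so one utterance triggers only once
            return True
        return False


def detections(probabilities: Iterable[float], detector: SlidingWindowDetector) -> Iterator[int]:
    """Yield the frame indices at which the detector fires."""
    for index, probability in enumerate(probabilities):
        if detector.update(probability):
            yield index


if __name__ == "__main__":
    scores = [0.1, 0.2, 0.95, 0.97, 0.99, 0.98, 0.96, 0.2, 0.1]
    print(list(detections(scores, SlidingWindowDetector(cutoff=0.9, window_size=5))))
```

Averaging over a window means a single spurious high-probability frame (say, a cough) isn’t enough to wake the assistant; only several consecutive confident frames are. With the sample scores above, the detector fires once, at frame 6.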

The only caveat is that it supports just three wake words and, unlike some third-party satellite projects, doesn’t let you configure your own. But considering how long it takes to set up community satellites, I don’t mind saying “Okay Nabu” (even though I really wish I could yell “Wakey-wakey, smart homie” or “HQ respond”) to summon my voice assistant.

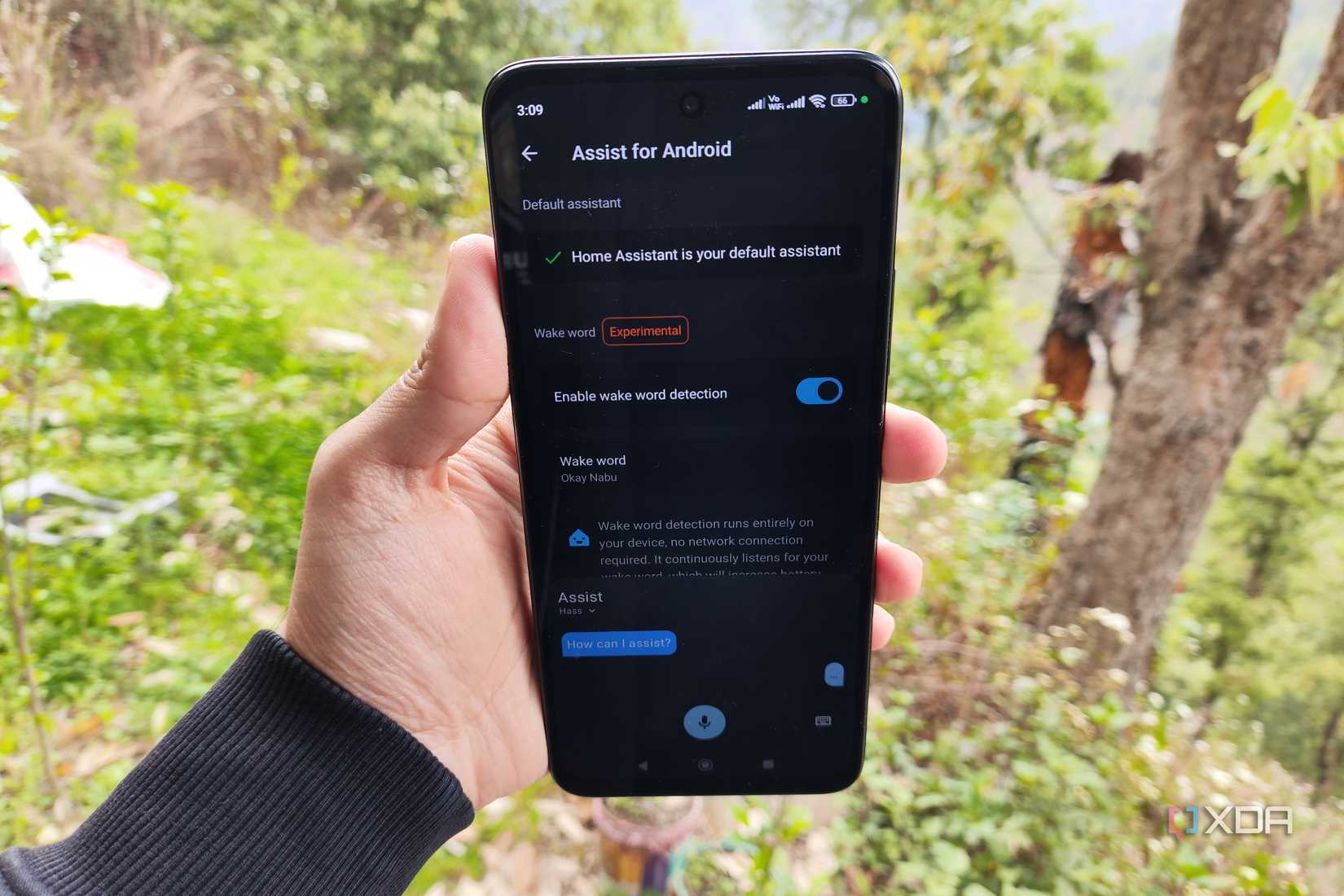

Assuming you’ve already installed the Home Assistant Companion app for Android and paired it with your server, the satellite functionality doesn’t require much legwork. First, set Home Assistant as your phone’s default assistant app. Then, grant Home Assistant access to your device’s microphone. I also had to disable battery optimization for the app and allow it to appear on the lock screen before it would detect the hot words.

Finally, turn on the Enable wake word detection toggle in the Assist for Android section of the Companion App tab in Settings, and pick the wake word that works best for you. Keep in mind that Home Assistant will keep the microphone active, which can drain your phone’s battery pretty fast if it’s as old as mine. The official HASS blog post recommends setting up automations to deactivate wake word detection when you’re away from home, but you can also configure trigger-action rules to turn the feature off at night.
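
In Home Assistant you’d build this as an automation triggered by a presence or time change, but the rule itself is simple enough to sketch in Python. The `wake_word_should_be_enabled` function below is a hypothetical decision helper, not a Home Assistant API: it assumes you already know whether anyone is home and the current local time, and the 23:00 to 07:00 quiet hours are placeholder values. Its result is what an automation’s action would apply to whatever wake word control your setup exposes; no specific entity name is assumed here.

```python
from datetime import time


def wake_word_should_be_enabled(
    now: time,
    someone_home: bool,
    quiet_start: time = time(23, 0),
    quiet_end: time = time(7, 0),
) -> bool:
    """Hypothetical rule: listen only when someone is home and it is
    outside quiet hours. The quiet window may wrap past midnight."""
    if not someone_home:
        return False  # nobody around, so save the battery
    if quiet_start <= quiet_end:
        # Same-day window, e.g. 01:00 to 05:00.
        in_quiet_hours = quiet_start <= now < quiet_end
    else:
        # Window wraps midnight, e.g. 23:00 to 07:00.
        in_quiet_hours = now >= quiet_start or now < quiet_end
    return not in_quiet_hours
```

For example, `wake_word_should_be_enabled(time(23, 30), someone_home=True)` returns `False` because 23:30 falls inside the default quiet hours. The only subtle part is the midnight wrap: a naive `start <= now < end` check would never match a 23:00 to 07:00 window.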

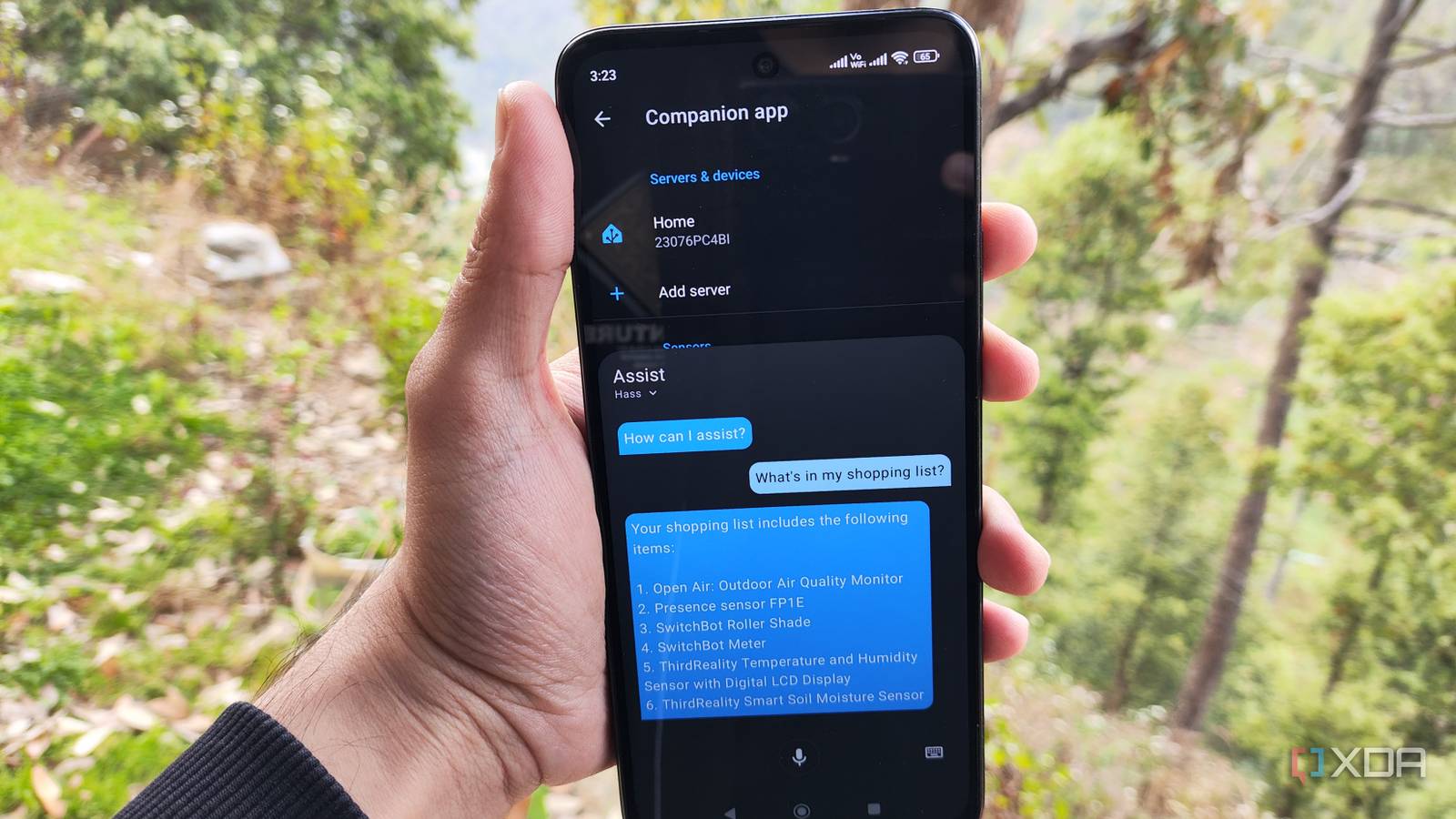

Either way, if you’ve done everything correctly, the built-in assistant should pop up after you say the wake word. Without additional tweaks, though, you can only chat with it via text, which undercuts the point of a voice-activated wake word. That’s where custom voice pipelines come into the equation…

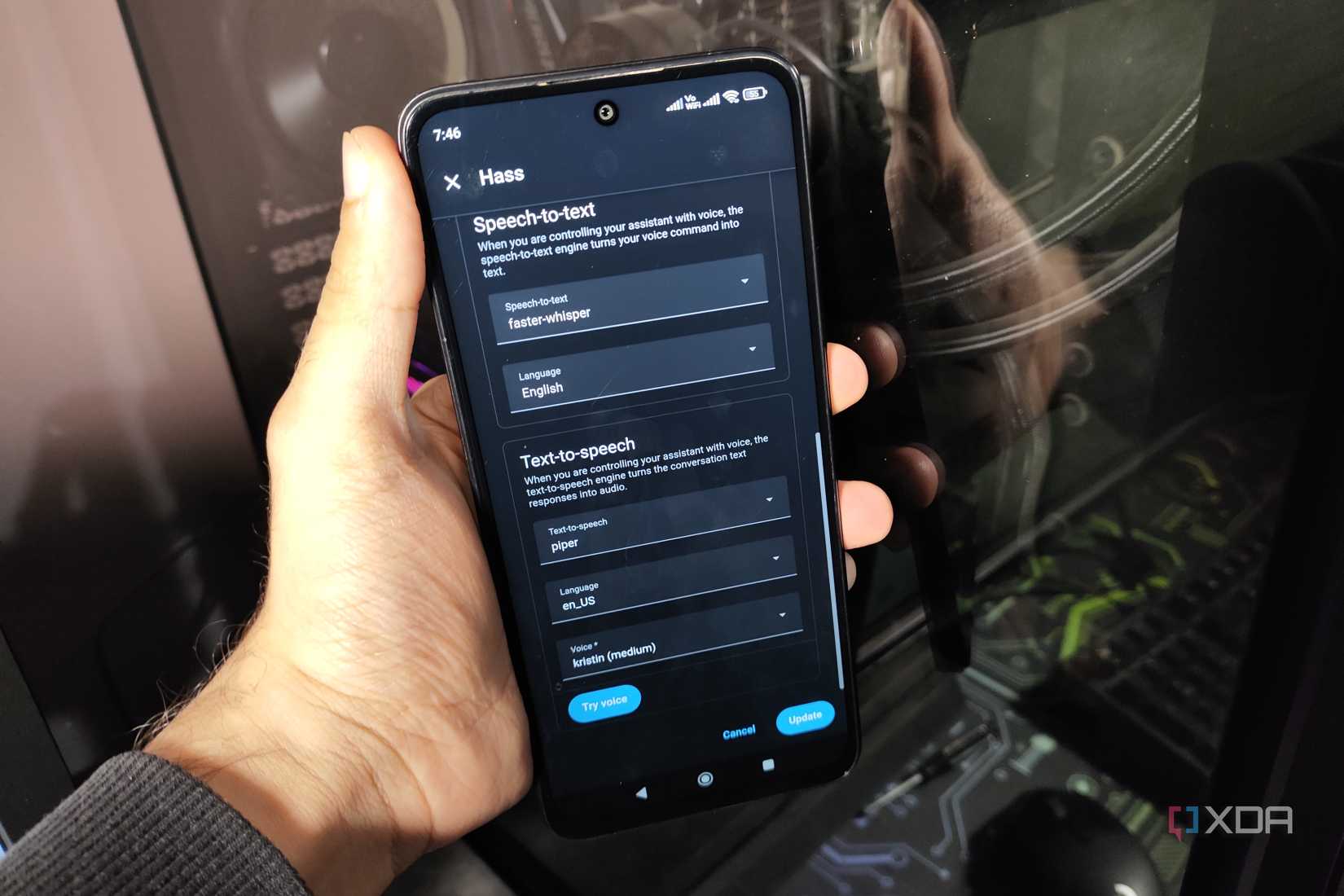

As a Home Assistant tinkerer with a couple of years under my belt, I’d already set up my own assistants inside the smart home platform. I use the Ollama integration to run my Qwen3 (8B) model as the conversation agent, since it delivers the most consistent results without taxing the GTX 1080 powering it. I tried the Speech-to-Phrase app from Home Assistant’s app store, but Whisper (or rather, faster-whisper) performed better as the speech-to-text engine. Finally, Piper serves as the text-to-speech engine and converts the LLM’s responses into audio. I’ve been using this trio with my smartphone-based Assist satellite for the past week, and it responds without any noticeable latency.
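
The custom voice pipeline described above chains three stages: faster-whisper turns speech into text, Qwen3 (through Ollama) produces a reply, and Piper turns that reply back into audio. The Python sketch below models only the data flow between those stages. The `VoicePipeline` class and the stage type names are hypothetical, and each stage is a stub function rather than a call into faster-whisper, Ollama, or Piper, whose real APIs aren’t shown here.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

# Hypothetical stage signatures. In the setup above these would be
# faster-whisper (STT), a Qwen3 model served by Ollama (agent), and Piper (TTS).
SpeechToText = Callable[[bytes], str]
ConversationAgent = Callable[[str], str]
TextToSpeech = Callable[[str], bytes]


@dataclass
class VoicePipeline:
    stt: SpeechToText
    agent: ConversationAgent
    tts: TextToSpeech

    def run(self, audio: bytes) -> Tuple[str, str, bytes]:
        """Run one utterance through STT, then the agent, then TTS.

        Returns the transcript, the text reply, and the synthesized audio."""
        transcript = self.stt(audio)
        reply = self.agent(transcript)
        return transcript, reply, self.tts(reply)


if __name__ == "__main__":
    # Stubs stand in for the real engines so the flow can run anywhere.
    demo = VoicePipeline(
        stt=lambda audio: audio.decode("utf-8"),  # pretend the audio is already text
        agent=lambda text: f"You said: {text}",   # placeholder for the LLM
        tts=lambda text: text.encode("utf-8"),    # placeholder for Piper
    )
    print(demo.run(b"turn on the lights"))
```

Keeping each stage behind a plain function signature mirrors how Home Assistant lets you pick the speech-to-text, conversation, and text-to-speech engines independently, which is why swapping Speech-to-Phrase for faster-whisper didn’t require touching the rest of the chain.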
