Finding meaning in text, an experiment in document clustering

For an assignment in the University of British Columbia’s CPSC 330 course in Applied Machine Learning, we were tasked with categorizing titles pulled from a sample of Food.com recipes. The goal was simple: use a subset of the bank’s 180,000+ recipes to find categories of recipes based purely on their titles. Achieving that goal was the real challenge, since the nature of the data (text) forced many different considerations in the modeling process.

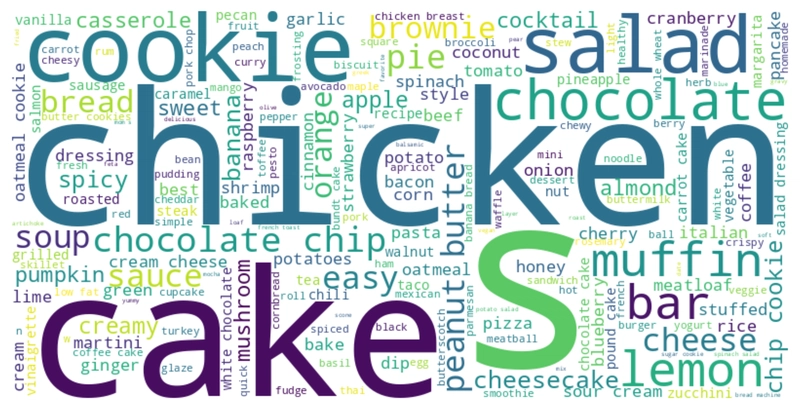

From our sample of recipes, we pulled a smaller subset of data consisting of 9100 words. We did this by removing duplicate entries, NaNs, and short names (< 5 characters), and by keeping only observations with tags among the top 300 tags in our sample. Below we can see what this unprocessed data of title names looks like, and a visualization of the words within the dataset.
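The filtering steps above could be sketched roughly as follows. This is a plain-Python illustration, not the code used for the assignment: the record layout (`name` and `tags` keys), the function name `filter_recipes`, and the reading of the tag rule as "at least one tag in the top N" are all assumptions.

```python
from collections import Counter


def filter_recipes(recipes, top_n_tags=300, min_name_len=5):
    """Return unique recipe names that pass the filters described above.

    `recipes` is assumed to be a list of dicts with "name" and "tags" keys
    (a hypothetical layout chosen for this sketch).
    """
    # Count how often each tag occurs across the whole sample.
    tag_counts = Counter(
        tag for recipe in recipes for tag in (recipe.get("tags") or [])
    )
    top_tags = {tag for tag, _ in tag_counts.most_common(top_n_tags)}

    seen, kept = set(), []
    for recipe in recipes:
        name = recipe.get("name")
        if not isinstance(name, str):   # drops missing names (None or a float NaN)
            continue
        name = name.strip()
        if len(name) < min_name_len:    # drops short names (< 5 characters)
            continue
        if name in seen:                # drops duplicate entries
            continue
        # Assumption: keep a recipe if at least one of its tags is a top tag.
        if not top_tags.intersection(recipe.get("tags") or []):
            continue
        seen.add(name)
        kept.append(name)
    return kept


# Tiny made-up sample; with top_n_tags=2 the top tags are "easy" and "breads".
sample = [
    {"name": "bread", "tags": ["breads", "easy"]},
    {"name": "bread", "tags": ["breads"]},            # duplicate
    {"name": "pie", "tags": ["desserts", "easy"]},    # too short
    {"name": None, "tags": ["easy"]},                 # missing name
    {"name": "moon cheese", "tags": ["rare-tag"]},    # no top tag
    {"name": "chicken soup", "tags": ["easy", "soups"]},
]
print(filter_recipes(sample, top_n_tags=2))
```

On the real data the same steps would more naturally be written with a dataframe library; the plain-Python version is only meant to make the order and effect of each filter explicit.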

We found that the shortest name in our subsample was “bread”, and the longest was “baked tomatoes with a parmesan cheese crust and balsamic drizzle”. The most common words included “chicken” and “cake”. You can refer to the word cloud visualization above to get a better picture of the data we were working with.

Now we need to decide how best to represent these recipe names as features for our model.

As we are trying to go from a sample of words to sensible categories of those words, we needed a way to accurately represent said text to our models. In class we learnt that one of the go-to methods for exploratory encoding of text is bag-of-words encoding with a CountVectorizer, which counts how often each word occurs.

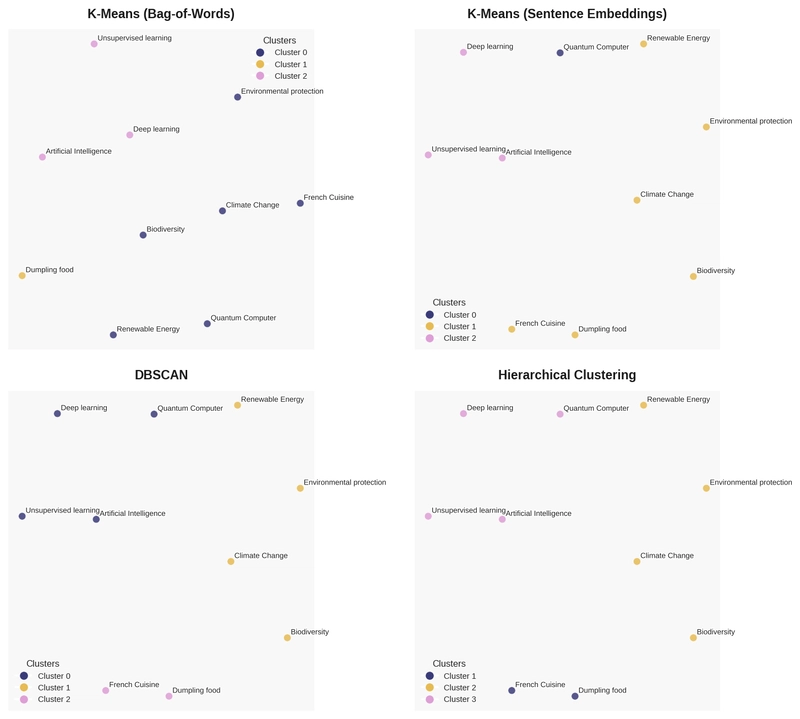

Using a CountVectorizer with bag-of-words encoding is intentionally shallow. We do not capture any meaning of the words, just their frequency, and pass this on to our model. We lose all the nuances that word meaning affords us (the humans), but at the same time we have a feature set that the model can interpret and make connections from, even if rudimentary at best. From our testing on Wikipedia data (the toy corpus we tried models on at the start of hw6!), we can see that bag-of-words encoding does present clusters for us, but they do not scream sensible right away. For example, the queries “Quantum Computer”, “Environmental protection”, “Renewable Energy”, and “Climate Change” were merged into one cluster by this model.
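To make the "just their frequency" point concrete, here is a minimal bag-of-words sketch in plain Python. It is a simplified stand-in for what scikit-learn's CountVectorizer produces (CountVectorizer applies its own tokenization rules), and the function names and the three example titles are made up for illustration.

```python
from collections import Counter


def bag_of_words(titles):
    """Return (vocabulary, count_matrix) using lowercase whitespace tokens."""
    tokenized = [title.lower().split() for title in titles]
    vocabulary = sorted({word for tokens in tokenized for word in tokens})
    matrix = []
    for tokens in tokenized:
        counts = Counter(tokens)
        # One column per vocabulary word, holding that word's count in the title.
        matrix.append([counts[word] for word in vocabulary])
    return vocabulary, matrix


def shared_word_score(row_a, row_b):
    """Dot product of two count rows: nonzero only if the titles share a word."""
    return sum(a * b for a, b in zip(row_a, row_b))


titles = ["chicken soup", "hen broth", "chicken cake"]
vocab, X = bag_of_words(titles)
print(vocab)                          # ['broth', 'cake', 'chicken', 'hen', 'soup']
print(shared_word_score(X[0], X[1]))  # 0: "chicken soup" vs "hen broth"
print(shared_word_score(X[0], X[2]))  # 1: "chicken soup" vs "chicken cake"
```

In this toy case the counts place "chicken soup" closer to "chicken cake" than to "hen broth", because only literal word overlap is visible to the model.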

If bag-of-words encoding does not do the job, then what might? We explored sentence embedding representations next. Using the pre-trained text embedding model ‘all-MiniLM-L6-v2’ from the Sentence Transformers package, we can convert the text into vector representations that might be significantly richer in detail in the eyes of our model. By using a pre-trained model we essentially get the advantage of a model that already knows the “meaning” behind the words it is looking at, so texts with similar meanings end up close together in vector space, which helps our clustering model find patterns in the words. To get an idea of what this vector array looks like, take a look at the table below which is a sample of our Wikipedia data after encoding.
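A minimal sketch of the encoding step, assuming the `sentence-transformers` package is installed. The helper names `embed_titles` and `cosine_similarity` are hypothetical; only `SentenceTransformer(...)` and its `encode` method come from the library.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def embed_titles(titles, model_name="all-MiniLM-L6-v2"):
    """Encode a list of strings into one embedding vector per string."""
    # Imported lazily so cosine_similarity works without the package installed.
    from sentence_transformers import SentenceTransformer

    model = SentenceTransformer(model_name)  # downloads the weights on first use
    # For all-MiniLM-L6-v2 the result has shape (len(titles), 384).
    return model.encode(titles)
```

Calling `embed_titles(["chicken soup", "hen broth"])` and passing the two resulting rows to `cosine_similarity` is one way to check whether the embedding treats two differently worded titles as related, which word counts alone cannot do.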

Using these sentence embeddings with k-means clustering as before, we see a marked improvement in our clusters. The clusters make more immediate sense: unlike before, the queries “Quantum Computer”, “Environmental protection”, “Renewable Energy”, and “Climate Change” are no longer one cluster! The model smartens up and excludes “Quantum Computer” from the grouping, instead placing that query with others such as “Unsupervised learning” and “Deep learning”.

Now that we have elected to use sentence embeddings for our text encoding, we consider our method of clustering itself. While we have been using k-means clustering from the beginning of this project, there are still countless other methods we can consider that we learned over the duration of CPSC 330. To save time I will gloss over the model specifics, but we tested our embeddings using DBSCAN with cosine distances (as opposed to k-means, which uses Euclidean distances) and hierarchical clustering. In the plot below you can see the clusters that were identified by each model.
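For readers who want to try the comparison, a rough sketch with scikit-learn follows. The hyperparameters (`n_clusters`, `eps`, `min_samples`) are placeholders rather than the values used in the assignment, the hierarchical model uses scikit-learn's default Ward linkage as an assumption, and the random "embeddings" only stand in for real sentence embeddings.

```python
import numpy as np
from sklearn.cluster import AgglomerativeClustering, DBSCAN, KMeans


def cluster_three_ways(embeddings, n_clusters, eps=0.3, min_samples=2):
    """Fit k-means, DBSCAN (cosine distance) and hierarchical clustering.

    Returns a dict mapping each method name to an array of cluster labels.
    """
    kmeans = KMeans(n_clusters=n_clusters, n_init=10, random_state=0)
    # DBSCAN needs no cluster count; eps is a cosine-distance radius here.
    dbscan = DBSCAN(eps=eps, min_samples=min_samples, metric="cosine")
    # Default settings: Ward linkage on Euclidean distances.
    hierarchical = AgglomerativeClustering(n_clusters=n_clusters)
    return {
        "kmeans": kmeans.fit_predict(embeddings),
        "dbscan": dbscan.fit_predict(embeddings),
        "hierarchical": hierarchical.fit_predict(embeddings),
    }


# Stand-in data: two tight groups of 3-dimensional vectors pointing in
# different directions (real sentence embeddings would have 384 dimensions).
rng = np.random.default_rng(0)
group_a = np.array([1.0, 0.0, 0.0]) + 0.01 * rng.standard_normal((5, 3))
group_b = np.array([0.0, 1.0, 0.0]) + 0.01 * rng.standard_normal((5, 3))
fake_embeddings = np.vstack([group_a, group_b])

labels = cluster_three_ways(fake_embeddings, n_clusters=2)
for method, method_labels in labels.items():
    print(method, method_labels)
```

One practical difference worth keeping in mind: k-means and hierarchical clustering are told how many clusters to produce, while DBSCAN decides that itself from `eps` and `min_samples` and can mark points as noise.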

In the end I selected hierarchical clustering as my preferred clustering method, as it presented the most sensible results in my opinion.

Now that we have selected our method of encoding data and our clustering method, we can go ahead and start work on the recipes! After fitting and training our models we can take a look at what clusters each one produces. There is a general consensus between the models to categorize types of food, whether it be hard, liquid, sweet, or spicy. Below we see the clusters that each model managed to identify.

DBSCAN seems to be a very face-value model, with some weird clusters like the “chex mex” one. Hierarchical and k-means both do a decent job at separating the foods into different categories, but from my visual inspection it seems like the results from the hierarchical model are more “sensible” in that they match my intuition for what the clusters should look like.
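Visual inspection of this kind usually comes down to listing a few titles per cluster. A small helper for that might look like the sketch below; the name `titles_by_cluster` and the example titles and labels are made up. The one library fact it relies on is that scikit-learn's DBSCAN labels noise points as -1.

```python
from collections import defaultdict


def titles_by_cluster(titles, labels, max_per_cluster=5):
    """Group titles by cluster label, keeping at most a few examples each."""
    groups = defaultdict(list)
    for title, label in zip(titles, labels):
        key = int(label)  # also accepts NumPy integer labels
        if len(groups[key]) < max_per_cluster:
            groups[key].append(title)
    return dict(groups)


example_titles = ["chicken soup", "beef stew", "lemon cake", "mystery dish"]
example_labels = [0, 0, 1, -1]  # -1 is how DBSCAN marks noise points

for label, members in sorted(titles_by_cluster(example_titles, example_labels).items()):
    name = "noise" if label == -1 else f"cluster {label}"
    print(f"{name}: {members}")
```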

All models have some sort of “abstract” name cluster, and all have at least one sweet cluster. The results from k-means show some redundant clustering and also further confirm my preference towards the hierarchical model.

Given the cluster results and my interpretations of them, hierarchical clustering wins for interpretability. But if I’m being honest, the real lesson here is that representation matters more than algorithm choice – all three embedding-based methods broadly agreed on what the clusters should look like, while bag-of-words failed regardless of which algorithm we threw at it.

That said, this approach is not without its quirks. Recipe names don’t always reflect their content; take “California roll salad”, for example, which lands with salads, not Japanese food. This makes sense when you think about it: the model is just reading the words, and “salad” is right there in the name. It has no way of knowing what a California roll actually is or what cuisine it belongs to; it just sees the word “salad” and groups accordingly.

This ties into a broader limitation with our embedding model. While ‘all-MiniLM-L6-v2’ is a powerful pre-trained model, it was trained on general English text rather than food-specific language. It understands that “chicken” and “beef” are related, sure, but it might not pick up on the nuances between something like “braised” and “stewed” the way a food-specific model might. A model fine-tuned on recipe or culinary data could potentially produce even tighter, more meaningful clusters.

Finally, we should acknowledge that our sample itself is doing some heavy lifting here. By filtering down to only recipes with tags in the top 300, we are inherently making our data more well-behaved. The clusters we see look pretty clean, but that’s at least in part because we’ve already removed a lot of the noise and edge cases that would exist in the full 180,000+ recipe dataset. On a messier, more complete sample, our models would likely struggle more and the clusters would not look nearly as tidy.
