I’ve started pairing my FOSS applications with self-hosted LLMs running on my Ollama and LM Studio instances, and AI-powered workflows have made my life a lot smoother. Whether it’s automatically creating tags for my bookmarked blogs, extracting text from images and PDFs, or helping me troubleshoot annoying terminal outputs, LLMs are great at tackling cumbersome tasks.

And that’s just the apps that natively support LLM integrations. Once I started setting up MCP servers, I was able to integrate LLMs with everything from my NAS OS to my Nextcloud instance. Home Assistant is the latest member of my MCP-powered arsenal, and although it took me a while to find the right combo of LLMs, clients, and MCP proxy services, it meshes exceedingly well with my local models.

Technically, Home Assistant includes native integrations for Ollama and other local providers, and that’s how I managed to configure a voice-controlled HASS pipeline that listens to my queries and responds accordingly. But an MCP server has its own utility in my Home Assistant hub, as it bridges my smart home setup with practically any client app that can use LLM-based prompts – including LM Studio and Claude Code.

Considering the sheer number of community packages available on Home Assistant, it shouldn’t come as a surprise that there are dozens of MCP servers for the smart home platform, each with its own set of tools. I initially wanted to use the official Home Assistant Model Context Protocol Server integration, but I encountered some issues when pairing the mcp-proxy tool with the LM Studio instance running on my Windows machine. For reference, that’s the same PC that houses my RTX 3080 Ti and the LLMs it powers.

So, I went with the unofficial HA-MCP Server repository instead. Now, I’m always cautious about connecting something as vital as my smart home platform with a community repo, but after going through the code and looking at its popularity in the HASS community, the HA-MCP Server doesn’t trigger any red flags in my book. Plus, it supports a ton of handy admin tools for Home Assistant, and doesn’t require extra integrations or proxy services to hook my LM Studio models to my HASS instance.

Unlike other MCP servers I’ve set up in the past, deploying HA-MCP was a cakewalk, especially thanks to its neat step-by-step installation process. First, I created a long-lived token on my Home Assistant system. This involved heading to the Security tab within my HASS Profile and hitting the Create token button under the Long-lived tokens section. Since Home Assistant only displays it once, I copied this token to my Vaultwarden instance just to be safe.
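Before wiring the token into anything else, it can be worth confirming that Home Assistant actually accepts it. Here’s a minimal sketch that pings the REST API root (`/api/`) with the token as a Bearer header, using only the Python standard library. The hostname and token value are placeholders I made up for illustration, and the helper names are hypothetical.

```python
import urllib.request


def build_ping_request(base_url: str, token: str) -> urllib.request.Request:
    """Build a GET request for Home Assistant's REST API root (/api/)."""
    url = base_url.rstrip("/") + "/api/"
    return urllib.request.Request(
        url,
        headers={
            # Long-lived tokens are sent as a standard Bearer token.
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="GET",
    )


def token_works(base_url: str, token: str, timeout: float = 5.0) -> bool:
    """Return True if the server answers the ping with HTTP 200."""
    request = build_ping_request(base_url, token)
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status == 200
    except OSError:
        # URLError, HTTPError (e.g. 401 for a bad token) and timeouts
        # are all OSError subclasses.
        return False


# Example call (placeholder URL and token, adjust for your own setup):
# print(token_works("http://homeassistant.local:8123", "PASTE_TOKEN_HERE"))
```

If the call returns `False`, the usual suspects are a mistyped token or a base URL that isn’t reachable from the machine running the check.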

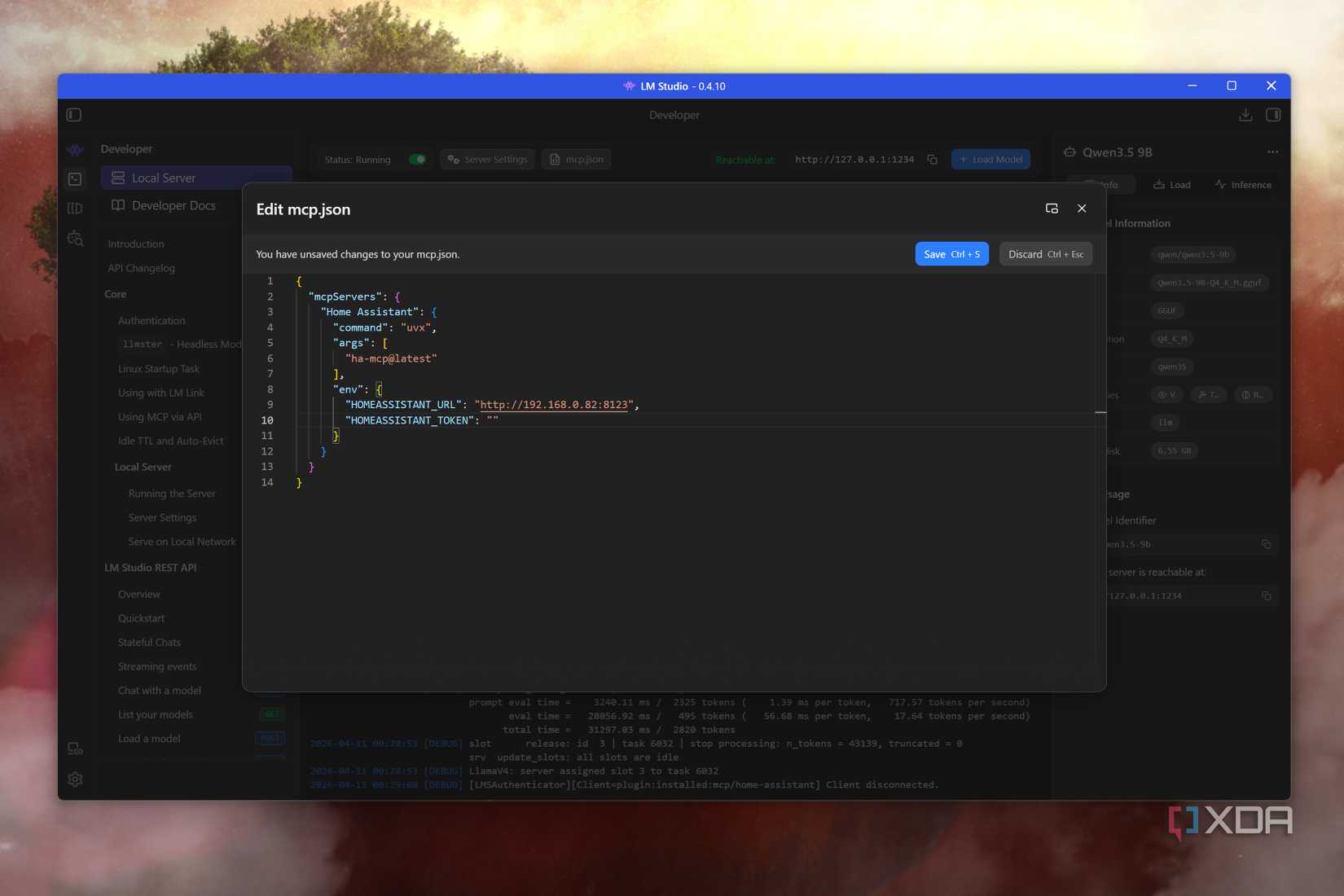

Then, I ran the winget install astral-sh.uv command in the Terminal (PowerShell, of course) on my Windows 11 PC to install uv. Since I use LM Studio, I pasted the HA-MCP server entry into the app’s mcp.json file to connect it to the MCP server, and by extension, my Home Assistant instance.

Remember to keep the JSON valid (matching braces, quotes, and commas) when copying the entry into your LM Studio instance.
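For a rough idea of the shape such an entry takes, here’s a minimal sketch that builds one in Python and serializes it, which also guarantees the result is valid JSON. Treat everything in it as an assumption rather than the exact snippet from the HA-MCP docs: the `mcpServers` wrapper follows the notation LM Studio uses for mcp.json, while the `ha-mcp@latest` package name, the `home-assistant` label, and the two environment variable names should be checked against the repository’s README before use. The URL and token are placeholders.

```python
import json


def build_mcp_config(ha_url: str, ha_token: str) -> dict:
    """Return an mcp.json-style dict with one server entry (assumed layout)."""
    return {
        "mcpServers": {
            # Hypothetical label; this is just the name shown in the client.
            "home-assistant": {
                # uvx ships with uv and runs a Python tool without installing it.
                "command": "uvx",
                # Assumed package name; verify against the HA-MCP README.
                "args": ["ha-mcp@latest"],
                "env": {
                    # Assumed variable names; verify against the HA-MCP README.
                    "HOMEASSISTANT_URL": ha_url,
                    "HOMEASSISTANT_TOKEN": ha_token,
                },
            }
        }
    }


config = build_mcp_config(
    "http://homeassistant.local:8123",  # placeholder URL
    "PASTE_LONG_LIVED_TOKEN_HERE",      # placeholder token
)

# json.dumps always emits well-formed JSON, so braces and commas can't go missing.
text = json.dumps(config, indent=2)
print(text)
```

Generating the entry this way is overkill for a one-off edit, but it sidesteps the stray-comma mistakes that tend to creep in when editing JSON by hand.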

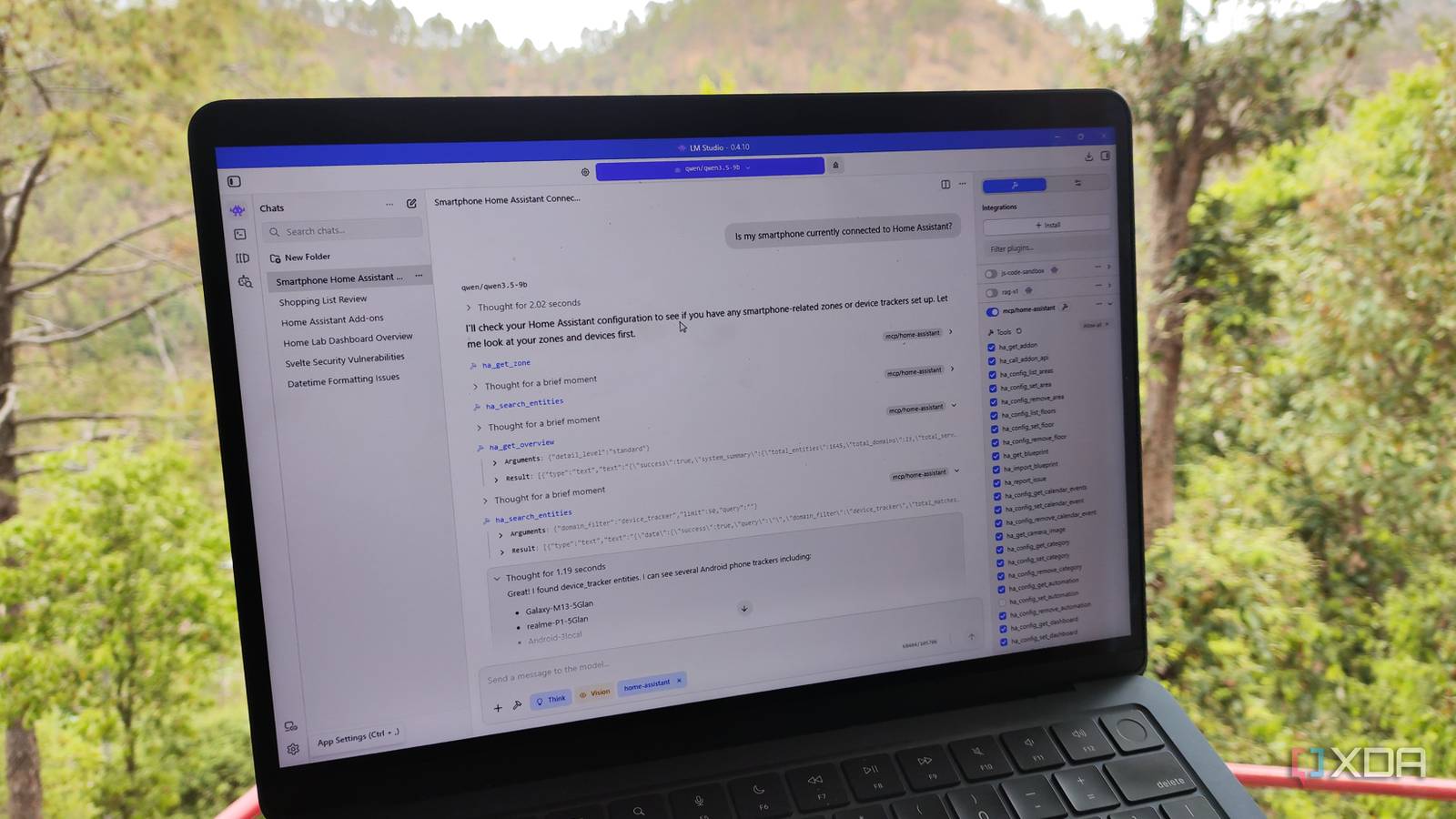

Once I’d saved the file and loaded my LLMs, the extensive tools within HA-MCP became visible under the integration section of LM Studio, and it was time to start experimenting.

I initially tried to control Home Assistant using some low-parameter models, but they left a lot to be desired. The DeepSeek R1 Distill Qwen 1.7B model, for instance, would spout complete nonsense, even though it was able to access my Home Assistant hub via the MCP server. It’d even hallucinate the names of my entities, apps, and integrations, to the point where it made up new ones on the spot. Gemma 3 (4B) was better, but it would often fail to access the MCP tools, forcing me to rerun my prompts. Considering that HA-MCP includes tools that can add entities and manage entire integrations, I wasn’t very confident in using weak LLMs, especially after witnessing their tragic attempts at answering simple questions.
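One way to catch invented entity names is to compare whatever a model mentions against the entity IDs Home Assistant actually reports. The sketch below is only an illustration of that idea: it assumes you already have the real IDs in a set (for example, collected from the `entity_id` fields returned by the REST API’s `/api/states` endpoint), and the function name and sample entities are hypothetical.

```python
import re

# Entity IDs look like "domain.object_id", e.g. "light.kitchen".
ENTITY_ID_PATTERN = re.compile(r"\b[a-z_]+\.[a-z0-9_]+\b")


def find_unknown_entities(reply: str, known_entity_ids: set[str]) -> list[str]:
    """Return entity-like names in the reply that Home Assistant doesn't know."""
    # Only consider domains that really exist, so strings such as
    # hostnames or file names aren't flagged by accident.
    known_domains = {entity_id.split(".", 1)[0] for entity_id in known_entity_ids}

    unknown = set()
    for candidate in ENTITY_ID_PATTERN.findall(reply):
        domain = candidate.split(".", 1)[0]
        if domain in known_domains and candidate not in known_entity_ids:
            unknown.add(candidate)
    return sorted(unknown)


# Hypothetical data for illustration.
known = {"light.kitchen", "sensor.office_temperature"}
reply = (
    "I turned on light.kitchen and light.garage_lamp. "
    "See sensor.office_temperature for the reading."
)
print(find_unknown_entities(reply, known))
```

Here `light.garage_lamp` gets flagged because the `light` domain exists but that particular entity doesn’t, which is the pattern the smaller models kept falling into.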

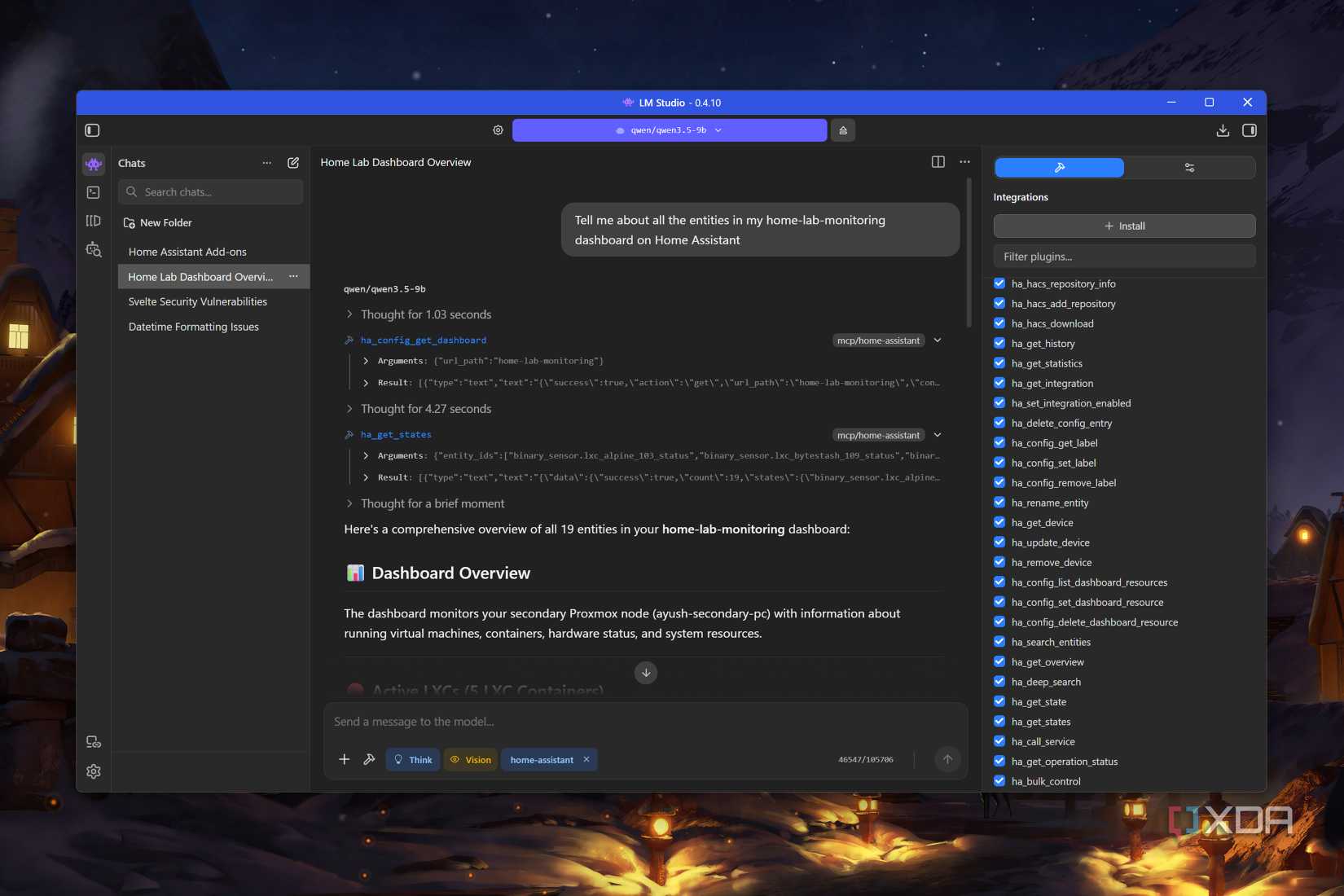

That’s when I tried using Qwen 3.5 (9B). Although this bulky model and its massive context length consume a lot of VRAM, it was really consistent in its results. For example, I queried it about the entities in my custom dashboards, and this LLM was able to generate detailed, accurate reports on my devices. HA-MCP Server lets my LLMs access different areas, apps, integrations, and even HACS offerings, and Qwen 3.5 was able to deliver precise answers every time. Plus, I was able to trigger a bunch of HASS automations, modify scripts, and update integrations from LM Studio, so there’s a lot of utility in this project.

That said, I’ve disabled a handful of tools with high-level access, namely those pertaining to backup restoration, reloading HASS Core, updating services, writing files, and triggering scripts. On paper, letting my LLMs control my smart devices sounds neat, but I’d rather not deal with a vague prompt rendering my entire Home Assistant setup useless.
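With dozens of tools on offer, a quick way to decide which ones to switch off is to sort them by risky keywords first and review the flagged ones by hand. The sketch below shows that triage step only. The keyword list mirrors the categories above, but the tool names are made up for illustration and won’t match HA-MCP’s real tool names, and the actual toggling still happens in the client.

```python
# Keywords for the high-risk categories; adjust to taste.
BLOCKED_KEYWORDS = ("backup", "reload", "restart", "update", "write_file", "run_script")


def split_tools(tool_names, blocked_keywords=BLOCKED_KEYWORDS):
    """Split tool names into (allowed, blocked) lists by keyword match."""
    allowed, blocked = [], []
    for name in tool_names:
        lowered = name.lower()
        if any(keyword in lowered for keyword in blocked_keywords):
            blocked.append(name)
        else:
            allowed.append(name)
    return allowed, blocked


# Hypothetical tool names, purely for illustration.
tools = [
    "get_entity_state",
    "list_areas",
    "restore_backup",
    "reload_core",
    "write_file",
    "run_script",
]

allowed, blocked = split_tools(tools)
print("Keep enabled:", allowed)
print("Review and disable:", blocked)
```

A keyword match is deliberately blunt, so it will occasionally flag something harmless. For tools that can break a smart home, erring on that side seems like the safer default.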
