Your Latency Problem Isn’t Model Size (It’s Your Routing)

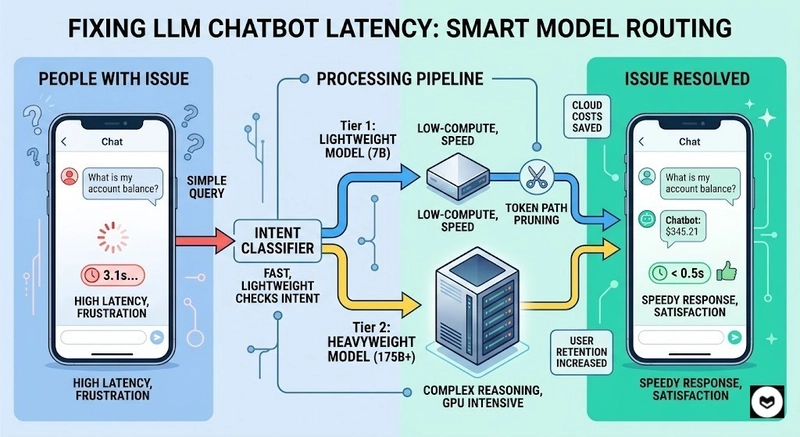

We spent months chasing latency: bigger GPUs, smaller batch sizes, every optimization trick in the book. Yet our chatbot still took more than 3 seconds per response. Our throughput dashboards looked green, but our users were staring at blank loading states.

We assumed the model was the bottleneck. It wasn’t. The real culprit was routing every request, regardless of complexity, through the same heavyweight model.

Instead of one model for everything, we implemented a tiered inference architecture. The logic is simple: classify intent, then match compute to need.
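To make "classify intent, then match compute to need" concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the tier names, the keyword heuristic, the word-count thresholds, and the stub backends are hypothetical and are not the classifier or the models we actually ran. A production classifier would more likely be a small model than a keyword list.

```python
from typing import Callable, Dict, Tuple

# Hypothetical hints that a request needs multi-step reasoning.
REASONING_HINTS = ("explain", "compare", "step by step", "debug", "why")


def classify_intent(prompt: str) -> str:
    """Toy heuristic: map a prompt to a tier name (small / medium / large)."""
    text = prompt.lower().strip()
    words = text.split()
    # Reasoning-style or very long prompts go to the heavyweight tier.
    if any(hint in text for hint in REASONING_HINTS) or len(words) > 60:
        return "large"
    # Very short prompts (greetings, yes/no answers) go to the cheapest tier.
    if len(words) <= 8:
        return "small"
    return "medium"


def route(prompt: str, backends: Dict[str, Callable[[str], str]]) -> Tuple[str, str]:
    """Pick a tier for the prompt and call the matching backend."""
    tier = classify_intent(prompt)
    return tier, backends[tier](prompt)


# Stub backends standing in for real model endpoints (assumed, not real APIs).
backends = {
    "small": lambda p: f"[small model] {p}",
    "medium": lambda p: f"[medium model] {p}",
    "large": lambda p: f"[large model] {p}",
}

if __name__ == "__main__":
    for prompt in ("hi there", "Explain why my deployment fails"):
        print(route(prompt, backends))
```

The useful property of this shape is that the classifier is cheap compared with the heavyweight model, so the routing step adds little latency to the requests that still need the large tier.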

We used MegaLLM to integrate this routing logic without rebuilding our entire inference pipeline. The integration took a weekend; the results were game-changing.

Most AI latency problems are architectural, not infrastructural. Before you upgrade your GPU specs or obsess over CUDA kernels, look at your request distribution.
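One way to look at a request distribution is to label a sample of logged requests by tier and count the shares. The sketch below assumes you already have one tier label per request (from whatever classifier you use); the function names and the latency figures in the usage example are placeholders, not measurements from our system.

```python
from collections import Counter
from typing import Dict, Iterable


def tier_distribution(tiers: Iterable[str]) -> Dict[str, float]:
    """Return the fraction of requests that fall into each tier."""
    counts = Counter(tiers)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {tier: count / total for tier, count in counts.items()}


def expected_latency(distribution: Dict[str, float],
                     latency_by_tier: Dict[str, float]) -> float:
    """Mean latency in seconds if each tier is served at its own latency."""
    return sum(share * latency_by_tier[tier]
               for tier, share in distribution.items())


if __name__ == "__main__":
    # Placeholder labels for a sample of logged requests.
    sample = ["small", "small", "medium", "large"]
    dist = tier_distribution(sample)
    # Placeholder per-tier latencies in seconds; substitute your own numbers.
    tiered = {"small": 0.5, "medium": 1.0, "large": 3.0}
    single_model = {tier: 3.0 for tier in tiered}
    print(dist)
    print(expected_latency(dist, tiered), expected_latency(dist, single_model))
```

If most of your traffic lands in the cheapest tier, the gap between the two numbers is a rough upper bound on what routing can buy you before any hardware change.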

Stop burning GPU cycles on trivial queries. If you’re looking for tools to help with this, MegaLLM is a solid example of a platform that handles tiered inference without the headache of a custom-built stack.

Disclosure: This article references MegaLLM (https://megallm.io) as one example platform.
