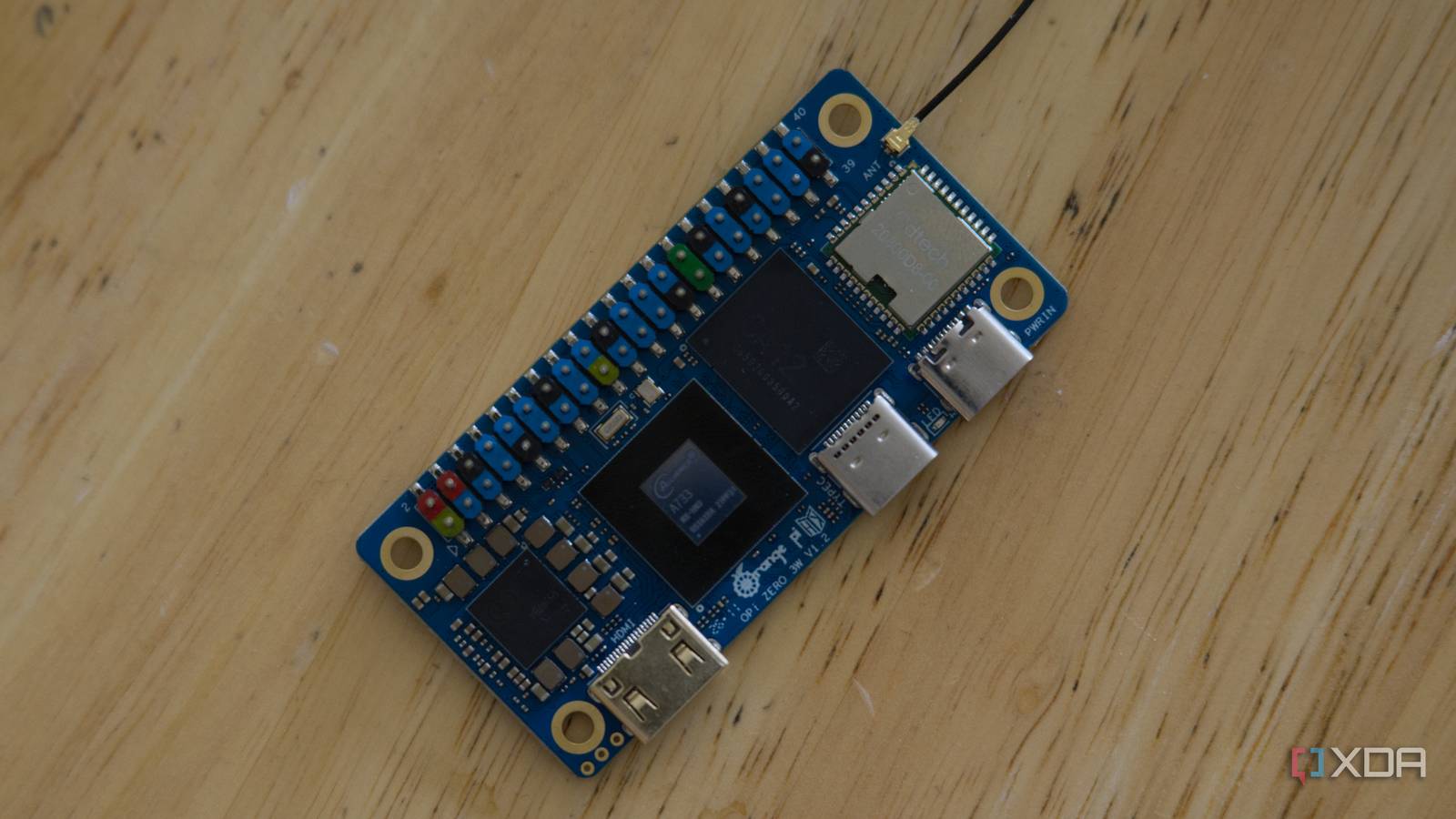

The Raspberry Pi 5 is still the default answer when somebody wants a small Linux board, and for good reason. However, there’s a much smaller, much cheaper board that beats it on several of the specs enthusiasts care about most: the newly released Orange Pi Zero 3W. It’s roughly the same physical size as a Raspberry Pi Zero, runs an octa-core Allwinner A733 with up to 16GB of LPDDR5, has a Vulkan-capable PowerVR GPU and a 3 TOPS NPU on board, and starts at $25 for the 1GB model.

Out of the box, two of the most interesting blocks on the chip do nothing. The GPU and the video engine both sit idle: Orange Pi’s official image rasterizes 3D in software and decodes video on the CPU. I spent some time getting the silicon to actually do its job, and the result is an image that enables the major advertised accelerator blocks I cared about: Vulkan, OpenCL, hardware video decode/encode, and the NPU.

There are still a couple of gaps to fill, but after a few hours of tinkering, this board is in a much more usable state than it was previously.

The Allwinner A733 is an octa-core SoC, packing two big Cortex-A76 cores at up to 2.0 GHz paired with six smaller A55 efficiency cores. It’s the same A76 core the Raspberry Pi 5 uses, though the Pi 5 runs its four at 2.4 GHz. The Pi 5 should still win many single-threaded tasks as a result, but the A733 has a real advantage in mixed or heavily parallel workloads that can use the extra A55 cores.

After that, the gap widens. The OPi Zero 3W has LPDDR5 memory where the Pi 5 still uses LPDDR4X, WiFi 6 and Bluetooth 5.4 against the Pi 5’s WiFi 5 and Bluetooth 5.0, and onboard eMMC up to 32GB or UFS 3.0 storage up to 128GB as an option, where the Pi 5 boots from a microSD card by default. It has USB-C with DisplayPort 1.4 alt mode, two MIPI CSI connectors, a MIPI DSI connector, and a 4K-capable mini HDMI output. The Pi 5 has stronger mainstream display support through dual 4Kp60 micro-HDMI and two flexible MIPI camera/display transceivers, but it doesn’t have USB-C DisplayPort alt mode or the same compact Zero-sized connector mix.

The two specs that really matter are the GPU and the NPU. The A733 has an Imagination PowerVR B-Series BXM-4-64 MC1 with full Vulkan 1.3 support, and a VeriSilicon AIPU rated at 3 TOPS for INT8 inference. The Pi 5 has a VideoCore VII GPU with its own Vulkan support and no NPU at all. If you’re doing anything with on-device ML, that alone makes the OPi significantly more compelling.

The price is also a big deal. You’ll pay $25 for the 1GB model, $50 for 4GB, $80 for 8GB, or $99.90 for 12GB, with 16GB pricing yet to be announced. The Pi 5’s 8GB model is more expensive than the 12GB OPi, and it comes without the NPU, LPDDR5, or WiFi 6, and with only four cores.

Plug an SD card with Orange Pi’s official Ubuntu image (these and the Debian images are the only ones available right now) into the board and boot it, and most of the chip works. The CPU does the usual CPU things. Wi-Fi connects, Bluetooth pairs, and HDMI works. Run anything that needs the GPU, though, and you’ll find it isn’t being used. Open a 3D-heavy webpage and Chromium falls back to software rasterization. Try to play a 1080p video and the player decodes it on the CPU cores at full utilization, because the video engine doesn’t work either.

Here’s the problem: the silicon is physically present, but in the official images I examined there’s no loaded GPU driver and no userspace stack for either block. The PowerVR GPU’s kernel module isn’t loaded at all, and there are no Vulkan, OpenGL ES, or OpenCL libraries. The video engine has its kernel driver loaded and exposes its character device under /dev, but nothing in userspace can use it. Both blocks are effectively dead from the perspective of any program that wants to use them.
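A quick way to see this state for yourself is to look for the pieces that would normally be there. The sketch below is not part of the build described here; it simply checks the standard DRM device directory and the usual Vulkan and OpenCL ICD manifest locations under a given root. The function name and the report layout are made up for illustration, and vendor stacks can install manifests elsewhere.

```python
from pathlib import Path

# Common search locations for the Vulkan loader and the OpenCL ICD loader.
# Assumption: the vendor stack, if present, registers itself in one of these.
VULKAN_ICD_DIRS = ("usr/share/vulkan/icd.d", "etc/vulkan/icd.d")
OPENCL_VENDOR_DIR = "etc/OpenCL/vendors"


def probe_accelerators(root="/"):
    """Report which DRM nodes and ICD manifests exist under `root`.

    `root` can be "/" on a running board or the mount point of an image.
    Missing directories simply produce empty lists.
    """
    root = Path(root)
    dri = root / "dev" / "dri"
    return {
        "card_nodes": sorted(p.name for p in dri.glob("card*")),
        "render_nodes": sorted(p.name for p in dri.glob("renderD*")),
        "vulkan_icds": sorted(
            p.name for d in VULKAN_ICD_DIRS for p in (root / d).glob("*.json")
        ),
        "opencl_icds": sorted(
            p.name for p in (root / OPENCL_VENDOR_DIR).glob("*.icd")
        ),
    }
```

On an image in the state described above, you would expect a display card node but empty Vulkan and OpenCL lists; calling `probe_accelerators("/")` on the board prints nothing by itself, so wrap it in `print()` to inspect the result.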

So why doesn’t Orange Pi just ship the drivers? It’s hard to tell. These libraries are proprietary blobs that ship under their own EULAs, and redistribution may depend on vendor-specific agreements or packaging decisions. Radxa makes a board called the Cubie A7S that uses the same Allwinner A733 chip, and the video stack appears to work on their image, with a usable PowerVR userspace stack, working Vulkan, and a functional BSP video path.

Orange Pi doesn’t ship those Allwinner BSP blobs in its Linux images. If I were to guess, assuming a license is involved, I’d wager Orange Pi doesn’t have the same agreement Radxa does. That’s merely an inference, though, and not confirmed. What is certain is that on the OPi side, there’s a kernel perfectly happy to drive the silicon and multiple Ubuntu and Debian images with nothing in userspace that can talk to it. So I decided to merge the two.

Before I get into what actually worked, I first tried taking Radxa’s image, which has the GPU and video engine working natively, and bolting OPi’s bootloader onto it. Same chip, so why not? Unfortunately, the chip wasn’t the problem; everything around it was. The OPi’s WiFi is SDIO-attached, but Radxa’s Cubie A7S uses the AIC8800 chip on USB, so Radxa’s prebuilt Wi-Fi modules couldn’t probe the bus the OPi uses, and OPi’s prebuilt SDIO modules wouldn’t load on Radxa’s kernel. Radxa’s image also expects an interactive first-boot wizard to create the user account, and that wizard doesn’t run on a serial-only headless boot, so all accounts in /etc/shadow were locked from the start. The bootloader format was different, console naming was too, and plenty more had to be manually patched. I diagnosed most of it over a serial-to-USB adapter, and it eventually booted after a lot of back and forth of flashing, debugging, fixing, and flashing again. Wi-Fi was still dead and the rootfs was a mess. A couple of hours in, I decided on a new plan.

That plan turned out to be straightforward enough, and I wish I’d started with it. I took Orange Pi’s image as the base, because that’s the one that already gets boot, the default user, and the Wi-Fi driver stack right. Then I borrowed only the bits the OPi image is missing from Radxa’s image: the proprietary userspace and the kernel module source for the GPU.

For the GPU, Radxa ships the kernel module as portable source with Linux version code checks up to 6.8, so DKMS rebuilds against OPi’s kernel cleanly, even going from Radxa’s 5.15 image to Orange Pi’s 6.6. The userspace libraries ship as ordinary Debian packages with file lists, so it’s mostly a matter of walking the file list and copying the contents into the OPi rootfs at the same paths. Same trick for the video engine. After I copied everything over and reflashed my SD card, the OPi suddenly had a working OMX implementation, a registered GStreamer plugin, and the codec libraries to back them up.
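The "walk the file list and copy" step can be sketched roughly as follows. This is an illustration rather than the actual build script: it assumes a dpkg-style list file (one absolute path per line, as found under /var/lib/dpkg/info/), a mounted source rootfs, and a mounted destination rootfs. The function name `transplant_package` is hypothetical.

```python
import os
import shutil
from pathlib import Path


def transplant_package(list_file, src_root, dst_root):
    """Copy every path named in a dpkg-style .list file between two rootfs trees.

    Directories are created, regular files are copied with metadata, and
    symlinks are recreated as symlinks so versioned .so chains survive.
    Returns the relative paths of the files and links that were copied.
    """
    src_root, dst_root = Path(src_root), Path(dst_root)
    copied = []
    for line in Path(list_file).read_text().splitlines():
        rel = line.strip().lstrip("/")
        if not rel or rel == ".":
            continue  # skip blank lines and the "/." root entry
        src, dst = src_root / rel, dst_root / rel
        if src.is_symlink():
            dst.parent.mkdir(parents=True, exist_ok=True)
            if dst.is_symlink() or dst.exists():
                dst.unlink()
            dst.symlink_to(os.readlink(src))
            copied.append(rel)
        elif src.is_dir():
            dst.mkdir(parents=True, exist_ok=True)
        elif src.is_file():
            dst.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(src, dst)
            copied.append(rel)
    return copied
```

Checking for symlinks before directories matters here: a symlinked directory would otherwise be recreated as a real, empty directory in the destination.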

There were a couple of fiddly bits along the way. One header file is missing from OPi’s kernel-headers package (bsp/include/sunxi-sid.h) even though the kernel config asks for it, so I had to grab that from Radxa’s image for the GPU rebuild. Radxa’s GStreamer config also only had three of the ten compatibility flags the plugin supports turned on, and rewriting it to enable all ten was required to get video decode actually working.

The hardware was set at this point, and with everything working over serial and the OPi connected to Wi-Fi, I thought I was ready to call the project complete. Then I plugged it into my monitor and discovered a problem I hadn’t experienced on the stock image: Xorg auto-bound to the wrong DRM device, specifically the PowerVR render-only node instead of the sunxi-drm node that actually has the HDMI connector.
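One way to tell the two DRM devices apart is that only the display controller has connectors. The sketch below assumes the usual sysfs layout, where connectors appear as entries such as `card0-HDMI-A-1` next to `card0` under /sys/class/drm. The function names are illustrative, and the card numbering on a given board may differ.

```python
import re
from pathlib import Path

CARD_RE = re.compile(r"card\d+")
CONNECTOR_RE = re.compile(r"(card\d+)-(.+)")


def cards_with_connectors(drm_class_dir="/sys/class/drm"):
    """Map each DRM card name to the connector names sysfs lists for it."""
    base = Path(drm_class_dir)
    cards = {}
    if not base.is_dir():
        return cards
    for entry in sorted(base.iterdir()):
        if CARD_RE.fullmatch(entry.name):
            cards.setdefault(entry.name, [])
        else:
            match = CONNECTOR_RE.fullmatch(entry.name)
            if match:
                cards.setdefault(match.group(1), []).append(match.group(2))
    return cards


def display_card(drm_class_dir="/sys/class/drm"):
    """Return the /dev/dri path of the first card that has a connector."""
    for card, connectors in sorted(cards_with_connectors(drm_class_dir).items()):
        if connectors:
            return f"/dev/dri/{card}"
    return None
```

A render-only device shows up with an empty connector list, so it is never returned by `display_card()`.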

This was easy enough to fix, but then I discovered the mouse cursor was leaving trails, because Allwinner’s KMS driver was only repainting full-screen page flips and ignoring the small dirty rects a cursor move generates. The fix was to force Xorg into a software-shadow framebuffer path, which routes every screen update through a full-frame buffer copy to the KMS framebuffer. That is the only path the Allwinner KMS driver actually services. As a side effect, this disables glamor on the modesetting driver, so any GL client that goes through the X server (Firefox, for example) loses hardware-accelerated buffer sharing and falls back to software compositing. Chromium routes around it through ANGLE-on-Vulkan, which talks to the DRM device directly and never asks X to composite GPU buffers. Firefox can’t do that.
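For reference, both fixes map onto documented options of Xorg's modesetting driver: `kmsdev` selects the DRM device, `AccelMethod "none"` turns glamor off, and `ShadowFB` enables the shadow framebuffer. The exact configuration used for this image isn't shown here, so treat the snippet generator below as a guess at its general shape; the device path and the identifier are placeholders.

```python
def modesetting_snippet(kms_device, identifier="Display"):
    """Render an xorg.conf.d Device section for the modesetting driver.

    kms_device: the DRM node that owns the HDMI connector (placeholder;
    it depends on how the kernel numbers the cards on a given boot).
    """
    lines = [
        'Section "Device"',
        f'    Identifier "{identifier}"',
        '    Driver     "modesetting"',
        f'    Option     "kmsdev" "{kms_device}"',
        '    Option     "AccelMethod" "none"',  # disables glamor
        '    Option     "ShadowFB" "true"',     # software shadow framebuffer
        'EndSection',
    ]
    return "\n".join(lines) + "\n"
```

Writing the returned text to a file under /etc/X11/xorg.conf.d/ is the usual way to apply it; the file name is up to you.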

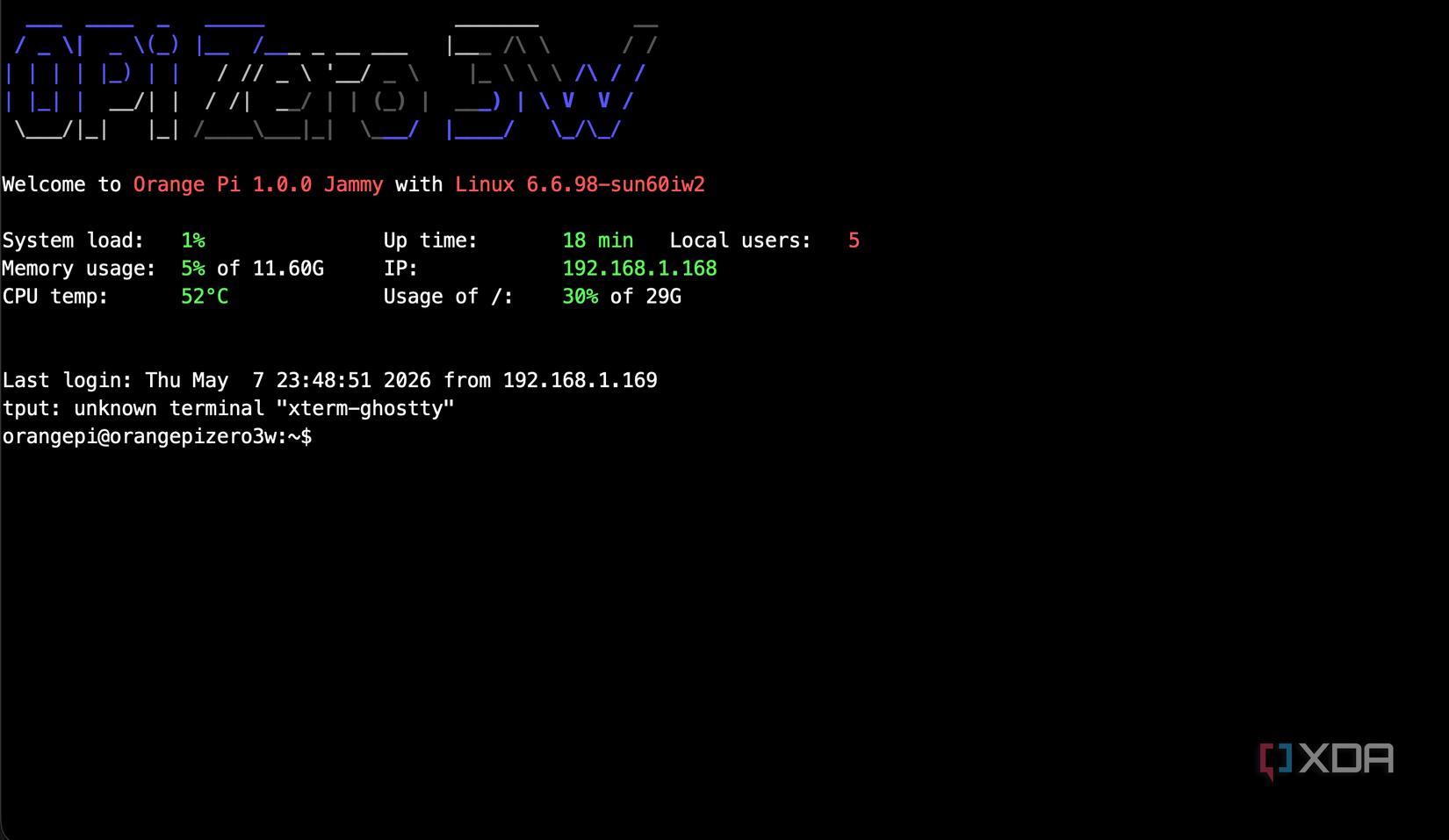

Still, I now have a pretty powerful SBC that actually works. With a single kernel module rebuild, two userspace transplants, and some config tweaks, the board boots into the same Orange Pi Ubuntu desktop you’d get from the official image, except now the silicon is doing what it’s advertised to do.

With the GPU and video engine running, the board can do a whole lot more. Chromium reports hardware acceleration for Canvas, compositing, rasterization, WebGL, WebGL 2, WebGPU, and video decode once launched through ANGLE-on-Vulkan, with chrome://gpu showing the PowerVR B-Series BXM-4-64 MC1 as the active Vulkan device. WebGPU samples that wouldn’t run at all on the stock image run perfectly, and the WebGL aquarium test actually runs. ANGLE-on-Vulkan is already the expected GL path on Windows, macOS, and Android, just not the default on Linux.
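Launching Chromium through ANGLE-on-Vulkan usually comes down to a couple of command-line switches. The sketch below builds such a command line using `--use-angle=vulkan` and `--enable-features=Vulkan`, which are existing Chromium switches. Whether these are the exact flags used on this image is an assumption, and the binary name varies by distribution.

```python
def chromium_vulkan_argv(binary="chromium", extra=()):
    """Build an argv list that asks Chromium to run ANGLE on its Vulkan backend.

    binary: name of the Chromium executable (assumed; some distributions
    call it chromium-browser).
    extra: any additional switches or a URL to append.
    """
    return [
        binary,
        "--use-angle=vulkan",        # select ANGLE's Vulkan backend
        "--enable-features=Vulkan",  # enable Vulkan in the GPU process
        *extra,
    ]
```

The list can be passed to `subprocess.run()` as-is, for example with `extra=("chrome://gpu",)` to check which device Chromium picked.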

Beyond the browser, anything that can use the PowerVR EGL or Vulkan stack directly has a real acceleration path now. That includes 3D games using GLES or Vulkan, which go from unplayable on software rendering to actually playable. It includes ML inference frameworks like llama.cpp’s Vulkan backend, ncnn, and MNN, which can now use the GPU for compute on top of the CPU (though I had to build the newest Vulkan headers to get that working). It also includes ffmpeg’s scale_opencl and scale_vulkan filter chains, so GPU compute paths in video processing exist on this board.

The video engine adds the other half. In my GStreamer tests, the BSP stack exposed hardware decode paths for H.264, H.265, VP9, VP8, MPEG, MJPEG, AVS, and AVS2, though the public A733/Radxa material mainly advertises H.264, H.265, VP9, and AVS2. GStreamer is the path used by tools like gst-play and Totem alongside other players built with GStreamer support. So if you wanted a tiny living-room media player the size of a Pi Zero that decodes 1080p videos while consuming basically no CPU, on a board cheaper than a Pi 5, you can build one. In testing, a 1080p H.264 Big Buck Bunny clip (10 seconds, 60fps, High profile) decoded at around 208 fps with the CPU essentially idle. Anything that uses ffmpeg still won’t get hardware decode, though, as there’s no VAAPI or V4L2-M2M backend.
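To put the decode figure in perspective, here is the arithmetic on the numbers quoted above (208 fps decode rate, 60 fps source, 10-second clip). The helper names are only for illustration.

```python
def realtime_factor(decode_fps, clip_fps):
    """How many times faster than playback speed the decoder runs."""
    return decode_fps / clip_fps


def decode_seconds(clip_seconds, clip_fps, decode_fps):
    """Wall-clock time needed to decode the whole clip at full speed."""
    return clip_seconds * clip_fps / decode_fps


if __name__ == "__main__":
    print(f"{realtime_factor(208, 60):.2f}x real time")
    print(f"{decode_seconds(10, 60, 208):.2f} s for the 10 s clip")
```

In other words, the decoder runs at roughly three and a half times playback speed, so the 600-frame clip takes a little under three seconds to decode flat out.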

The NPU is the other thing the Pi 5 just doesn’t have. However, there’s a weird oversight from Orange Pi here that kind of lines up with everything else I’ve found throughout this project. There’s a yolov5 demo at /opt/yolov5/yolov5, but you can’t actually run it. The reason is pretty funny: it was built against libopencv_imgcodecs.so.4.5, when the base image ships with libopencv_imgcodecs.so.4.5d. The demo can’t find the library and fails to launch. Creating symlinks for the three affected libraries got it running, though it shouldn’t have been necessary in the first place. Once running, the bundled YOLOv5 demo clocks in at 429 milliseconds on the AIPU. That’s not enough for smooth video inference on its own, but it’s plenty for motion-triggered detection on a doorbell camera, or any case where you want on-device ML without offloading to the cloud or a USB Coral.
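The symlink workaround can be sketched like this. Only libopencv_imgcodecs is named above, so the list of library base names is left as a parameter rather than guessed, and the library directory is a placeholder for wherever the OpenCV libraries live on the image. The function name is hypothetical.

```python
from pathlib import Path


def link_expected_sonames(lib_dir, names,
                          expected_suffix=".so.4.5",
                          shipped_suffix=".so.4.5d"):
    """Create <name>.so.4.5 -> <name>.so.4.5d links for each base name.

    Existing files or links are never overwritten, and names whose
    shipped library is missing are skipped. Returns the link names created.
    """
    lib_dir = Path(lib_dir)
    created = []
    for name in names:
        target = lib_dir / f"{name}{shipped_suffix}"
        link = lib_dir / f"{name}{expected_suffix}"
        if not target.exists():
            continue  # nothing to point the link at
        if link.exists() or link.is_symlink():
            continue  # leave whatever is already there alone
        link.symlink_to(target.name)  # relative link within the same directory
        created.append(link.name)
    return created
```

Called with `names=["libopencv_imgcodecs"]` plus the other two affected libraries, this gives the demo the file names its loader is looking for. Running `ldd` on the demo binary is the usual way to find out which names are unresolved.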

Pairing the GPU with the VPU and the NPU gives you the backend for things like a lightweight AirPlay receiver, hardware-decoded 1080p playback, basic Vulkan games, and local ML demos. And all of that is happening on an SBC that starts at $25.

Unfortunately, it’s not all ready to go just yet. mpv falls back to software because it only knows about hardware video acceleration through ffmpeg’s libavcodec hwaccel APIs, and none of those have a backend for Allwinner’s BSP video stack. Allwinner never wrote a VAAPI driver, the upstream V4L2 cedrus driver doesn’t know about the A733 yet, and ffmpeg’s h264_omx encoder doesn’t help either. The only OMX IL Core in the BSP is Allwinner’s libOmxCore.so, while h264_omx is hardcoded against libomxil-bellagio, so it can’t even load the components GStreamer is happily using.

The OMX path that does work sits behind GStreamer’s gst-omx plugin, which talks to libOmxCore.so directly via gstomx.conf. This means GStreamer-native tools (Totem, gst-play, anything built on GStreamer) get hardware decode, but ffmpeg-based players don’t, and that includes the likes of Jellyfin. Firefox WebGL is software-rendered for the same shadow framebuffer reason discussed earlier, which isn’t helped by Firefox not shipping ANGLE on Linux to route around it. Chromium does, which is why Chromium works. The same VAAPI issue applies there too, though, so Chromium video decode doesn’t work, and it’s the same story on Radxa’s board.

The Raspberry Pi 5 still wins on the things it’s always won on: a polished image where everything works the day you flash it, near-perfect documentation, and a community big enough that whatever you want to do has been done before by somebody. That said, the hardware the Orange Pi Zero 3W packs is, at this point, just better. More cores, faster RAM, an NPU, hardware video coverage on more codecs, and all of it in a smaller form factor at a lower price. The only thing standing between that hardware and the user, on the stock image, is a userspace stack the OEM doesn’t ship. Once you put the userspace back, this board is in a different conversation than its size and price tag would suggest.

If you come across another board with the same problem, the methods I used here are likely to still apply. It’s a strange thing to have to fix yourself, but the fix exists, the build script is reproducible, and the result is a tiny SBC that does what every spec on the box says it does. That’s about all I really wanted from it.
