The topic of Apple trained an AI that captions images better than models ten times its size is currently the subject of lively debate — readers and analysts are keeping a close eye on developments.

This is taking place in a dynamic environment: companies’ decisions and competitors’ reactions can quickly change the picture.

Apple researchers have developed a new way to train AI models for image captioning that delivers more accurate, detailed descriptions while using far smaller models. Here are the details.

In a new study titled RubiCap: Rubric-Guided Reinforcement Learning for Dense Image Captioning, a team of Apple Researchers collaborated with the University of Wisconsin—Madison to develop a new framework for a dense image captioning model, yielding state-of-the-art results across multiple benchmarks.

Dense image captioning is the task of generating detailed, region-level descriptions of everything happening within an image, rather than a single overall summary.

In other words, it identifies multiple elements and regions in an image, and describes them with fine-grain detail, resulting in a much richer understanding of the scene than an overall description.

Here’s are a few examples from Stanford’s original dense captioning paper, DenseCap: Fully Convolutional Localization Networks for Dense Captioning:

Dense image captioning can be used for a variety of tasks, such as training vision-language and text-to-image models. When applied to user-facing features, it can improve image search and even accessibility tools.

The problem, according to the data the researchers, is that current AI-based approaches to training dense image captioning models tend to fall short in significant ways:

Dense image captioning is critical for cross-modal alignment in vision-language pretraining and text-to-image generation, but scaling expert-quality annotations is prohibitively expensive. While synthetic captioning via strong vision-language models (VLMs) is a practical alternative, supervised distillation often yields limited output diversity and weak generalization. Reinforcement learning (RL) could overcome these limitations, but its successes have so far been concentrated in verifiable domains that rely on deterministic checkers—a luxury not available in open-ended captioning.

With that in mind, they proposed a new framework to tackle these limitations, which took an interesting approach.

They randomly sampled 50,000 images from two training datasets, PixMoCap and DenseFusion-4V-100K.

For each image, the system generated several caption options using a set of existing vision language models, including Gemini 2.5 Pro, GPT-5, Qwen2.5-VL-72B-Instruct, Gemma-3-27B-IT, and Qwen3-VL-30B-A3B-Instruct.

At the same time, the model being trained under RubiCap produced its own caption for that image.

After that, Qwen2.5-7B-Instruct served as the judge, scoring the captions against each criterion to produce the reward signal used for training.

As a result, the model received more precise, structured feedback on what to fix, leading to more accurate captions without relying on a single “correct” answer.

When all was said and done, the researchers produced three models: RubiCap-2B, RubiCap-3B, and RubiCap-7B, with 2 billion, 3 billion, and 7 billion parameters, respectively.

And compared with current approaches, they did surprisingly well, outperforming models with up to 72 billion parameters.

Across extensive benchmarks, RubiCap achieves the highest win rates on CapArena, outperforming supervised distillation, prior RL methods, human-expert annotations, and GPT-4V-augmented outputs. On CaptionQA, it demonstrates superior word efficiency: our 7B model matches Qwen2.5-VL-32B-Instruct, and our 3B model surpasses its 7B counterpart. Remarkably, using the compact RubiCap-3B as a captioner produces stronger pretrained VLMs than those trained on captions from proprietary models.

In a blind ranking evaluation, RubiCap-7B earns the highest proportion of rank-1 assignments among all models—including 72B and 32B frontiers—achieving the lowest hallucination penalty and strongest accuracy.

In case you missed that, the researchers noted that the smaller, 3-billion-parameter model outperformed its larger counterpart on certain benchmarks, suggesting that a strong, dense image captioning model doesn’t necessarily require massive scale to deliver high-quality results.

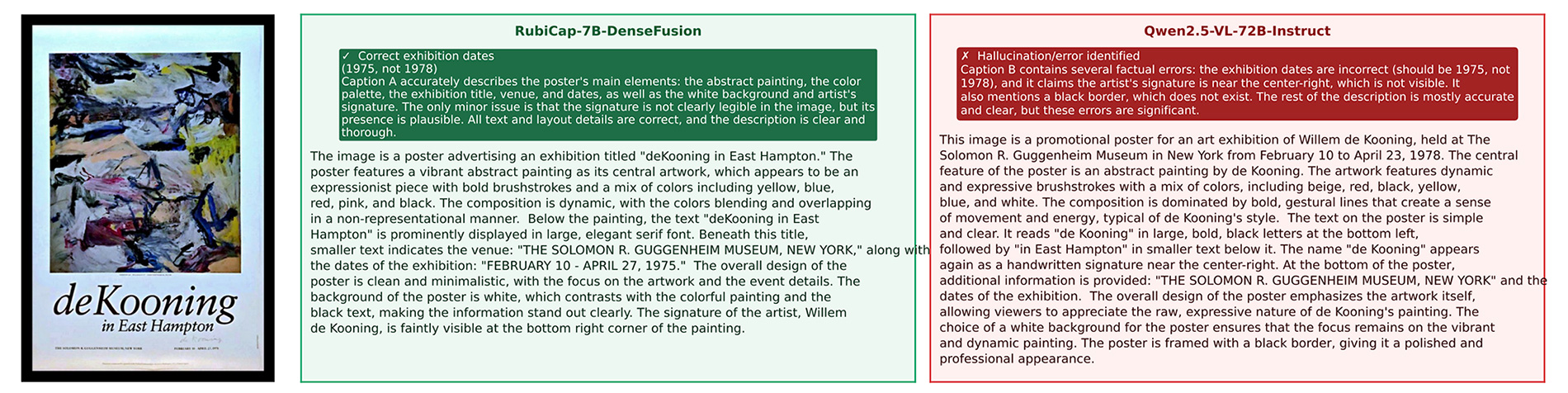

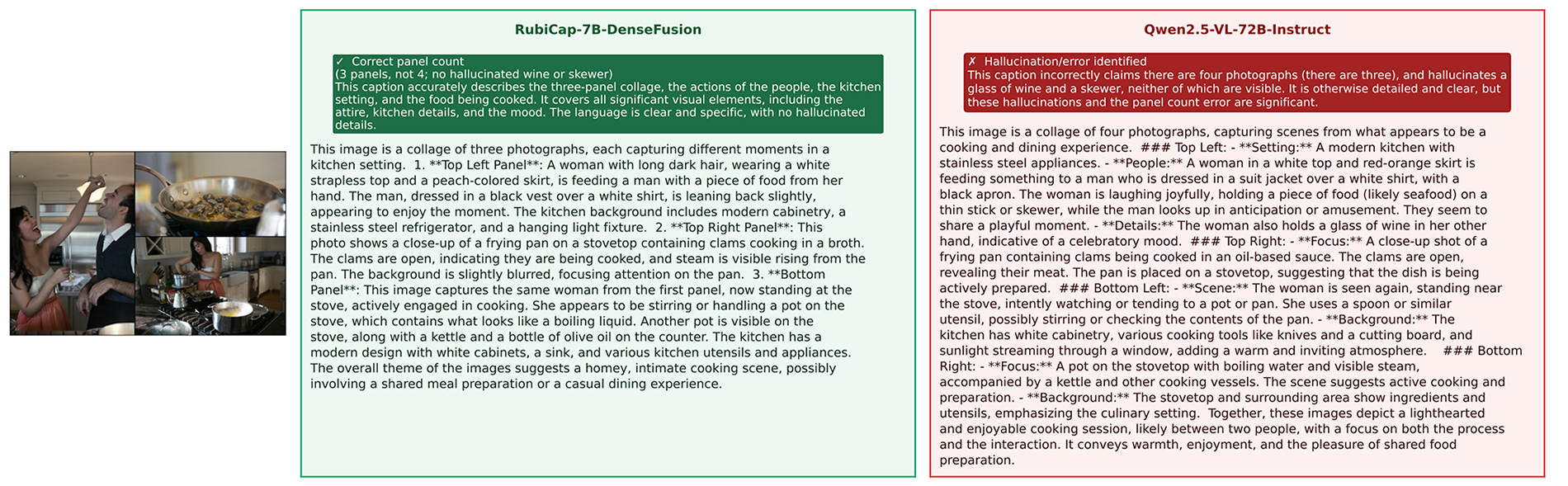

Here are some caption comparisons between RubiCap-7B-DenseFusion and Qwen2.5-VL-7B-Instruct:

To learn more about the study, including an in-depth look at its technical terms, follow this link.

Why it matters

News like this often changes audience expectations and competitors’ plans.

When one player makes a move, others usually react — it is worth reading the event in context.

What to look out for next

The full picture will become clear in time, but the headline already shows the dynamics of the industry.

Further statements and user reactions will add to the story.