OpenAI's release of GPT-5.5, which you can try right now, is the subject of lively debate, and readers and analysts are keeping a close eye on developments.

The release comes in a fast-moving market, where one company's decisions and its competitors' reactions can quickly change the picture.

As the LLM competition heats up, we're seeing AI tech companies burn through version numbers like they were made of wood. Any sign of slacking or lagging could leave a company permanently behind its peers, so everyone is racing to release new models as often as possible.

OpenAI is no exception: the company has just released GPT-5.5. If you have a nagging feeling that GPT-5.4 can't be that old, you're right, but that hasn't stopped the company from shipping a successor anyway.

Over on X, OpenAI announced that GPT-5.5 is now available for general use. It follows hot on the heels of GPT-5.4, which was released only in early March. We're seeing a similar pattern with Anthropic, where a model barely reaches its two-month anniversary before a successor steps in.

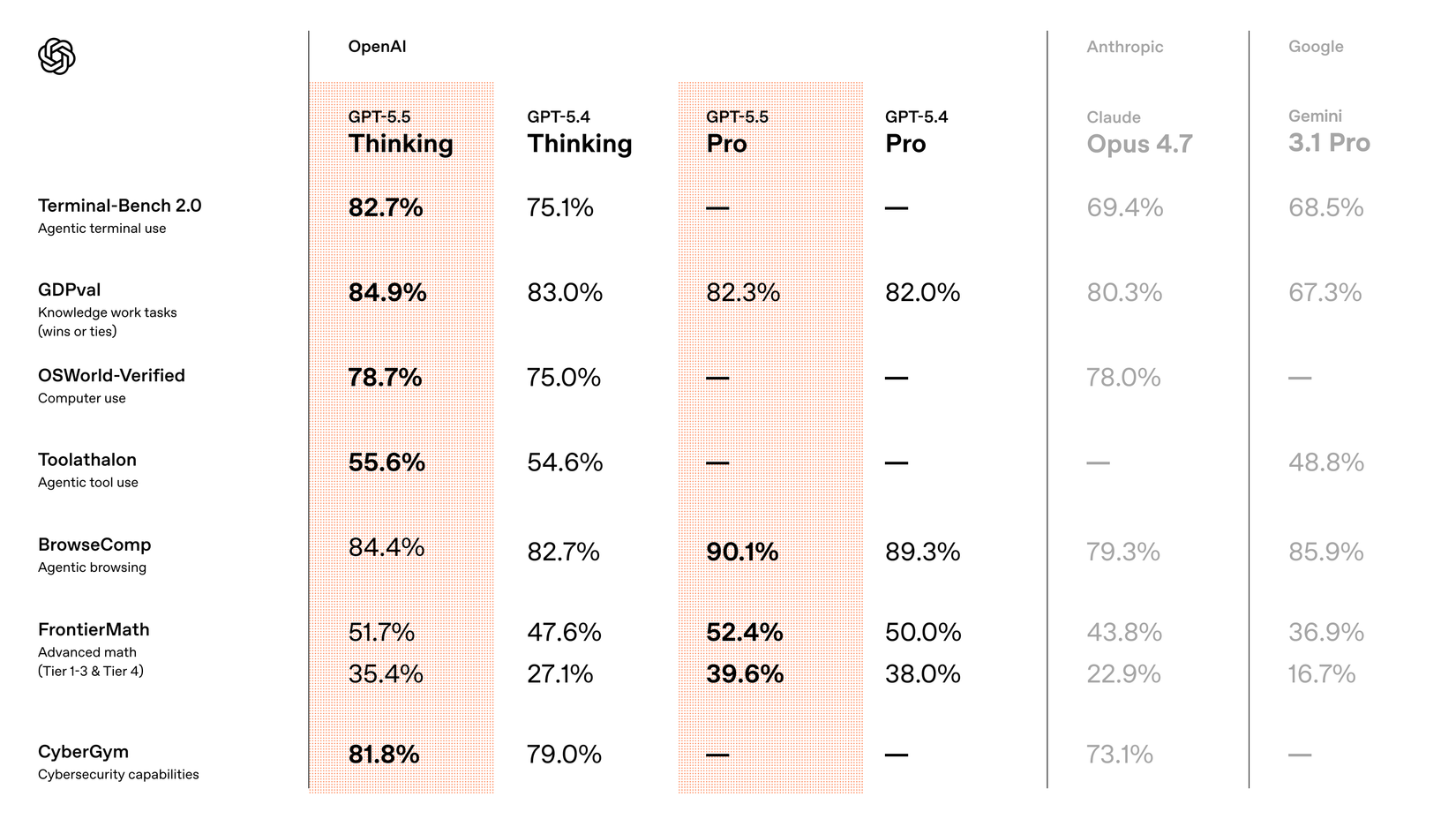

So, just how much can OpenAI do in under two months? In a reply to the original post, the company shared benchmarks comparing GPT-5.5 with its older model, shown in the image above. The biggest jump came on Terminal-Bench 2.0 using Thinking, where GPT-5.5 scored more than 7 percentage points higher than the previous model. OpenAI also included competitors' results in the chart, indicating that the company believes ChatGPT is comparable to, or ahead of, the opposition.

Why it matters

Releases like this tend to reset user expectations and shift competitors' plans.

When one player makes a move, others usually react, so it is worth reading this release in that context.

What to look out for next

The full impact of GPT-5.5 will take time to become clear, but the release already shows how quickly the industry is moving.

Further statements from OpenAI and reactions from users will fill in the rest of the story.