React to Rust, no try/catch

I built three libraries independently. When I put them in the same project, the boundaries between them disappeared. This is what was left.

The path: a click in a React form, through schema validation, into a Rust solver running in WebAssembly, back through a typed Result, and out to a rendered porkchop heatmap — without using try/catch for control flow anywhere.

The three libraries (lambert-izzo, @railway-ts/pipelines, and use-form) share an idiom by design: discriminated outcomes, no exceptions for control flow. The interesting question is what happens when you put them in the same project. The short answer: the seams disappear.

The demo lives at stackblitz.com/edit/vitejs-vite-9hmwtvdt. Go run it before reading the rest of this — it’s small, and the source is the argument.

A note on audience: if you’ve used Result/Option idioms before — Rust, fp-ts, neverthrow — the code samples will read naturally. If you haven’t, the first few may feel like jargon. The porkchop section earns the syntax; stick with it.

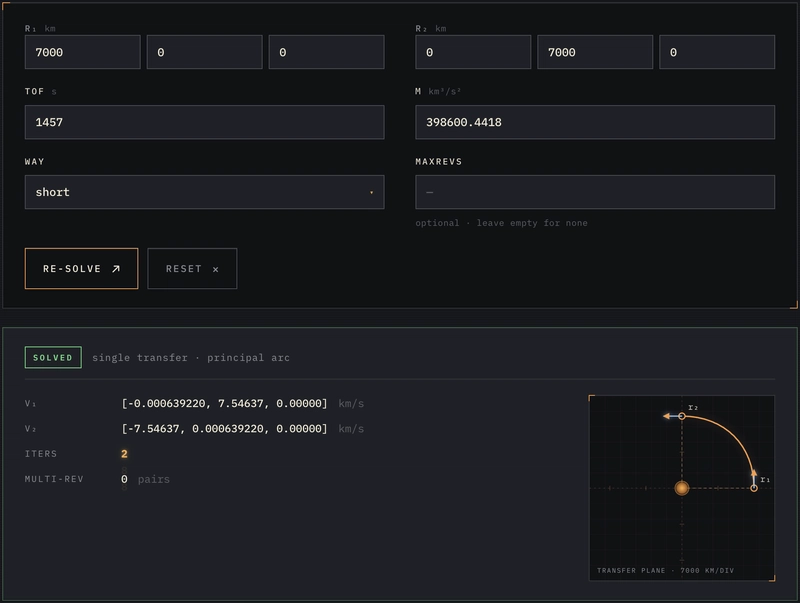

Two position vectors r₁ and r₂. A time of flight tof. A gravitational parameter μ. Find the velocities v₁ and v₂ that connect them under Newtonian gravity. That’s a Lambert two-point boundary-value problem, and Dario Izzo published a fast, robust algorithm for it in 2014.
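Written as equations, using only the symbols above, the problem reads as follows. This is the standard textbook statement of the two-body boundary-value problem, not something taken from the crate's documentation; t₁ is simply a label for the departure time.

$$
\ddot{\mathbf{r}}(t) = -\frac{\mu}{\lVert \mathbf{r}(t) \rVert^{3}}\,\mathbf{r}(t),
\qquad \mathbf{r}(t_1) = \mathbf{r}_1,
\qquad \mathbf{r}(t_1 + \mathrm{tof}) = \mathbf{r}_2
$$

The unknowns are the endpoint velocities $\mathbf{v}_1 = \dot{\mathbf{r}}(t_1)$ and $\mathbf{v}_2 = \dot{\mathbf{r}}(t_1 + \mathrm{tof})$.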

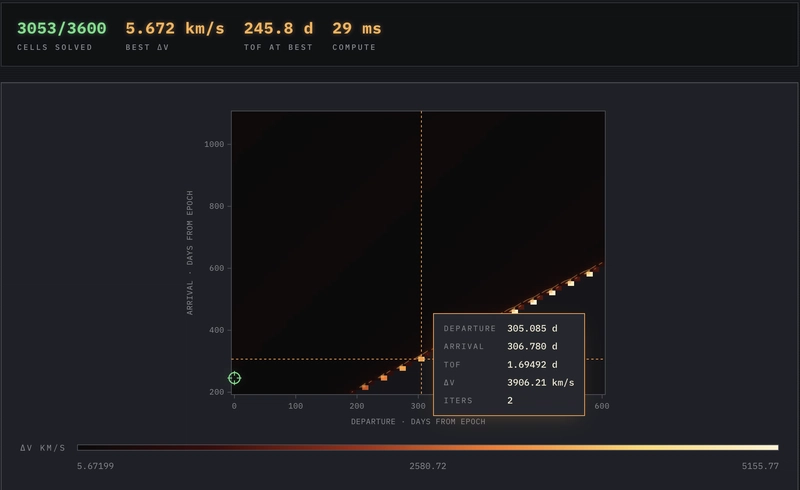

You’d reach for it any time you need to design a transfer orbit: an Earth-to-Mars launch window, a satellite rendezvous, a debris-removal mission. It’s the workhorse behind a porkchop plot — a heatmap over (departure date × arrival date) showing total Δv for every transfer in a window. The cheapest cell is the launch window.

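The demo computes exactly this plot. From the form input you type into, all the way down to the Rust crate that solves the boundary-value problem, every layer passes along the same shape: a typed outcome that is either a success or a failure with a reason.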

Most TypeScript codebases that talk to WASM reintroduce exceptions at the FFI boundary — the wrapper catches a JS Error, decides whether it was a panic or a domain failure, and projects something into the app. This is where the railway typically dies. lambert-izzo doesn’t have that boundary.

The Rust crate uses Result end-to-end, and the solver does not panic on bad input. Bad geometry, non-positive TOF, non-finite floats, out-of-range revolution counts — all of those return a typed LambertError variant. There is no panic! boundary to wrap because there are no panics on modeled input. The TypeScript wrapper’s only job is to project the Rust sum type into a JS tagged union without flattening either branch.

The crate ships two ways: as a pure-Rust crate on crates.io (no_std-friendly, [f64; 3] API, no hard math dep), and as an npm package via wasm-pack under the name lambert-izzo. The WASM payload is small enough for ordinary browser delivery.

result.error.kind is a discriminant: CollinearGeometry, DegeneratePositionVector, NonPositiveTimeOfFlight, etc. Even input validation (maxRevs > 32) returns through the same channel: RevsOutOfRange lands as kind: "err" with structured fields, not as a thrown error.
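To make the discriminant concrete, here is a minimal sketch of what such a tagged union can look like on the TypeScript side. The variant names come from the text above; the type names and the payload fields (`tof`, `requested`, `max`) are assumptions for illustration, and the published package's fields may differ.

```typescript
// Hypothetical shapes for illustration; the real package's field names may differ.
type LambertErrorSketch =
  | { kind: "CollinearGeometry" }
  | { kind: "DegeneratePositionVector" }
  | { kind: "NonPositiveTimeOfFlight"; tof: number }
  | { kind: "RevsOutOfRange"; requested: number; max: number };

// Exhaustive switch: adding a variant to the union without adding a case
// here becomes a compile-time error, because the function would fall
// through without returning a string.
function describeError(error: LambertErrorSketch): string {
  switch (error.kind) {
    case "CollinearGeometry":
      return "r1 and r2 are collinear; the transfer plane is undefined";
    case "DegeneratePositionVector":
      return "a position vector has zero length";
    case "NonPositiveTimeOfFlight":
      return `time of flight must be positive, got ${error.tof}`;
    case "RevsOutOfRange":
      return `maxRevs ${error.requested} exceeds the limit of ${error.max}`;
  }
}

const tooManyRevs: LambertErrorSketch = { kind: "RevsOutOfRange", requested: 40, max: 32 };
const revsMessage = describeError(tooManyRevs);
```

Inside each `case`, the compiler narrows `error` to the matching variant, so `error.tof` is only reachable where it exists.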

The deeper point: Rust’s type system forces the discipline that TypeScript’s culture only suggests. Once the Rust side is right, every TS consumer downstream inherits the property for free. The wrapper is two lines of projection logic. The wrapper can be two lines because the producer was correct.

The demo uses three fields from the success response: response.single.v1, response.single.v2, and response.diagnostics.single.iters. For multi-rev solutions there’s also response.multi[]. Everything is [number, number, number] for vectors — the same shape as the input. No BigInt, no proxies, no surprises.

@railway-ts/pipelines is four independent submodules that can be imported on their own. The demo touches all four.

Result. ok and err constructors. Verbs that operate on the railway without poking at the tag: mapWith (transform the success branch), mapErrWith (transform the error branch), match (pattern-match), partition (split a Result[] into successes and failures in one pass). Failure with a reason.
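For readers new to the idiom, the verbs are small enough to write by hand. The block below is a local stand-in, not the library's source: the `{ ok, value | error }` shape matches how the demo reads results (`result.ok`, `result.value`), but the exact signatures in @railway-ts/pipelines are an assumption here.

```typescript
// Hand-rolled stand-ins; the library's real signatures may differ.
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };

const ok = <T>(value: T): Result<T, never> => ({ ok: true, value });
const err = <E>(error: E): Result<never, E> => ({ ok: false, error });

// Transform the success branch; an error passes through untouched.
const mapWith =
  <T, U>(fn: (value: T) => U) =>
  <E>(result: Result<T, E>): Result<U, E> =>
    result.ok ? ok(fn(result.value)) : result;

// Transform the error branch; a success passes through untouched.
const mapErrWith =
  <E, F>(fn: (error: E) => F) =>
  <T>(result: Result<T, E>): Result<T, F> =>
    result.ok ? result : err(fn(result.error));

// Collapse both branches into one value.
const match = <T, E, R>(
  result: Result<T, E>,
  handlers: { ok: (value: T) => R; err: (error: E) => R },
): R => (result.ok ? handlers.ok(result.value) : handlers.err(result.error));

const parsed: Result<number, string> = ok(21);
const doubled = mapWith((n: number) => n * 2)(parsed);
const label = match(doubled, {
  ok: (v) => `value ${v}`,
  err: (e) => `failed: ${e}`,
});
```

The only place the tag is inspected is inside the verbs themselves; calling code composes them and never writes the branch.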

Option. some and none. mapToOption lifts a Result to an Option (errors become none). match over { some, none }. Plain absence — when the question is “do I have a value?”, not “did something go wrong?”.
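The lift from Result to Option can be sketched the same way. The `Option` representation below (`{ some: true, value }` versus `{ some: false }`) and the helper name `matchOption` are assumptions made for this example; only the behaviour, errors becoming none, is taken from the description above.

```typescript
// Hand-rolled stand-ins; the library's internal representation may differ.
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };
type Option<T> = { some: true; value: T } | { some: false };

const some = <T>(value: T): Option<T> => ({ some: true, value });
const none = <T = never>(): Option<T> => ({ some: false });

// Lift: keep the success value, deliberately forget the error's reason.
const mapToOption = <T, E>(result: Result<T, E>): Option<T> =>
  result.ok ? some(result.value) : none();

const matchOption = <T, R>(
  option: Option<T>,
  handlers: { some: (value: T) => R; none: () => R },
): R => (option.some ? handlers.some(option.value) : handlers.none());

const showDeltaV = (r: Result<number, string>): string =>
  matchOption(mapToOption(r), {
    some: (dv) => `${dv.toFixed(1)} km/s`,
    none: () => "no value",
  });

const solvedLabel = showDeltaV({ ok: true, value: 5.6 });
const failedLabel = showDeltaV({ ok: false, error: "CollinearGeometry" });
```

Note that the lift is lossy on purpose: after `mapToOption`, the caller can no longer ask why the value is missing, which is exactly the signal that the reason did not matter at that point.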

Schema. object, required, optional, chain, parseNumber, tupleOf, stringEnum, refineAt, and friends. Parses unknown input into typed values. Accumulates all errors in one pass by default. Standard Schema v1 compliant — Zod, Valibot, ArkType all interop, but pipelines is the native dialect.

Composition. pipe for immediate application, flow for point-free reusable pipelines, curry/uncurry for shape-shifting. pipeAsync/flowAsync for the same model when steps return promises.
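The difference between the two composition styles shows up even in a two-step version. `pipe2` and `flow2` below are hypothetical fixed-arity stand-ins written for this example; the library's `pipe` and `flow` accept more steps.

```typescript
// Two-step stand-ins for illustration only.
// pipe2 applies the steps to a value right now.
const pipe2 = <A, B, C>(value: A, ab: (a: A) => B, bc: (b: B) => C): C =>
  bc(ab(value));

// flow2 builds a reusable function and applies nothing yet.
const flow2 =
  <A, B, C>(ab: (a: A) => B, bc: (b: B) => C) =>
  (value: A): C =>
    bc(ab(value));

const daysToSeconds = (days: number): number => days * 86400;
const formatSeconds = (seconds: number): string => `${seconds} s`;

// Immediate application: the value comes first.
const twoDays = pipe2(2, daysToSeconds, formatSeconds);

// Point-free definition: no value is mentioned until the call site.
const tofLabel = flow2(daysToSeconds, formatSeconds);
const halfDay = tofLabel(0.5);
```

`flow2` is the form to reach for when the pipeline itself needs a name and will be called from several places.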

Many TypeScript projects pay for these capabilities three times — once for a validator, once for a Result library, once for hand-rolled async wiring between them. Here it’s one model.

useForm takes a schema and an initial-values object. It gives back a form handle whose getFieldProps(name) is spread directly onto a native <input>. There’s no <Controller> component, no register("name"), no resolver factory. The schema is the source of truth for types, validation, field paths, and error placement.

handleSubmit returns a Result. There is no try/catch wrapper around onSubmit; if validation fails, the failure is a typed return value. Three error layers (client, per-field async, server) compose with deterministic priority. Cross-field validation via refineAt (covered next). Standard Schema v1 means Zod / Valibot / ArkType also work — the demo uses pipelines natively because the cross-field rules are cleaner there.

The interesting move is the absence of a layer. There is no separate “form types” file, no resolver adapter, no useFieldRegister, no schema-to-error translation. The schema is the type. The schema is the validator. The schema is the field path source. Three jobs, one declaration.

The single-solve view rendered from the schema above. Every field in lambertRequestSchema has a one-to-one render — the vec3 tuples become three inputs, stringEnum becomes a select, emptyAsOptional becomes “optional · leave empty for none.” The green SOLVED badge is the success branch of the Result; below it is the typed LambertResponse (V₁, V₂, iter count, multi-rev pairs). The transfer-plane sketch in the corner plots r₁, r₂, and the connecting arc.

The porkchop schema (src/lib/schema.ts:38) does the same on the date windows: a triple refineAt chain enforces departureMax > departureMin, arrivalMax > arrivalMin, and the overlap rule between the two windows. Three rules, three error placements, no handler code.
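The idea of "a rule plus the field where its error lands" can be sketched without the schema library. Everything below is hand-rolled for illustration and is not the refineAt API; in particular, the third rule is only a guess at what the overlap rule checks, and the field placements are assumptions.

```typescript
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };

// Window bounds as plain day numbers, to keep the sketch free of Date handling.
type Windows = {
  departureMin: number;
  departureMax: number;
  arrivalMin: number;
  arrivalMax: number;
};
type FieldErrors = Partial<Record<keyof Windows, string>>;

// A cross-field rule names the field that should display its message.
type Rule = { at: keyof Windows; message: string; holds: (w: Windows) => boolean };

const rules: Rule[] = [
  { at: "departureMax", message: "must be after departureMin", holds: (w) => w.departureMax > w.departureMin },
  { at: "arrivalMax", message: "must be after arrivalMin", holds: (w) => w.arrivalMax > w.arrivalMin },
  // Assumed reading of the overlap rule: arrivals may not start before departures do.
  { at: "arrivalMin", message: "must not precede departureMin", holds: (w) => w.arrivalMin >= w.departureMin },
];

// Runs every rule and accumulates all failures instead of stopping at the first.
const validateWindows = (w: Windows): Result<Windows, FieldErrors> => {
  const errors: FieldErrors = {};
  for (const rule of rules) {
    if (!rule.holds(w) && errors[rule.at] === undefined) {
      errors[rule.at] = rule.message;
    }
  }
  return Object.keys(errors).length === 0
    ? { ok: true, value: w }
    : { ok: false, error: errors };
};

const badWindows = validateWindows({ departureMin: 10, departureMax: 5, arrivalMin: 0, arrivalMax: 20 });
const goodWindows = validateWindows({ departureMin: 0, departureMax: 30, arrivalMin: 100, arrivalMax: 200 });
```

Because each rule carries its own placement, the form layer only has to look up an error by field name; no handler decides where a message belongs.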

InferSchemaType<typeof lambertRequestSchema> is the type. Nothing else needs to know.

This is the bridge from the form to the WASM crate. The whole file is 41 lines (src/lib/solver.ts):

The fromOutcome adapter is two lines: a single ternary that re-tags the discriminated union. No try/catch. Not because we’re being clever — because the domain API does not report modeled failures by throwing. The Rust side already handed us a typed sum. A try/catch around solveLambert would be an exception handler with no producer.

solve = flow(toRequest, callSolver) is point-free composition. Two named stages. The form gives you a LambertRequestValues; flow runs it through toRequest (the seam where any future form/WASM-bridge divergence would land) then through callSolver, and you get a SolveResult. No glue code, no intermediate variables, no ifs.

solveBatch is .map(fromOutcome) over the batch result. The in-file comment explains why it doesn’t go through flow: it’s a single transformation, and ceremony doesn’t pay for itself here.
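A sketch of the bridge's shape, under stated assumptions: the request, response, and outcome types are simplified guesses, `flow2` is a two-step stand-in for the library's flow, and `mockSolveLambert` is a fake that performs no orbital mechanics. It exists only so the example runs without the WASM package.

```typescript
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };
type Vec3 = [number, number, number];

// Hypothetical, simplified shapes; the real package's types may differ.
type LambertRequest = { r1: Vec3; r2: Vec3; tof: number; mu: number };
type LambertResponse = { v1: Vec3; v2: Vec3; iters: number };
type LambertErrorOutput = { kind: "NonPositiveTimeOfFlight" } | { kind: "DegeneratePositionVector" };
type Outcome = { kind: "ok"; value: LambertResponse } | { kind: "err"; error: LambertErrorOutput };
type SolveResult = Result<LambertResponse, LambertErrorOutput>;

// Mock standing in for the WASM export. It returns outcomes and never throws.
const mockSolveLambert = (req: LambertRequest): Outcome => {
  if (!(req.tof > 0)) return { kind: "err", error: { kind: "NonPositiveTimeOfFlight" } };
  const norm = (v: Vec3): number => Math.hypot(v[0], v[1], v[2]);
  if (norm(req.r1) === 0 || norm(req.r2) === 0) {
    return { kind: "err", error: { kind: "DegeneratePositionVector" } };
  }
  return { kind: "ok", value: { v1: [0, 1, 0], v2: [0, -1, 0], iters: 3 } }; // dummy numbers
};

// The adapter: one ternary that re-tags the union. Both payloads pass through intact.
const fromOutcome = (o: Outcome): SolveResult =>
  o.kind === "ok" ? { ok: true, value: o.value } : { ok: false, error: o.error };

const flow2 =
  <A, B, C>(ab: (a: A) => B, bc: (b: B) => C) =>
  (value: A): C =>
    bc(ab(value));

type LambertRequestValues = LambertRequest; // form values and request coincide in this sketch
const toRequest = (values: LambertRequestValues): LambertRequest => values;
const callSolver = (req: LambertRequest): SolveResult => fromOutcome(mockSolveLambert(req));

const solve = flow2(toRequest, callSolver);
const solveBatch = (reqs: LambertRequest[]): SolveResult[] =>
  reqs.map(mockSolveLambert).map(fromOutcome);

const zeroTof = solve({ r1: [1, 0, 0], r2: [0, 1, 0], tof: 0, mu: 1 });
const solved = solve({ r1: [1, 0, 0], r2: [0, 1, 0], tof: 1, mu: 1 });
```

There is nothing for a try/catch to catch in this file, because no function in it signals a modeled failure by throwing.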

A note on the type. SolveResult = Result<LambertResponse, LambertErrorOutput>. The success branch carries the full WASM response (positions, velocities, multi-rev solutions, diagnostics). The error branch carries the structured LambertErrorOutput directly from the WASM crate. The .kind discriminant is preserved end-to-end. If the user types a TOF of zero, the schema rejects it before the request ever reaches the solver; if the user types a tiny but legal TOF that produces a RevsOutOfRange, that lands as a LambertErrorOutput on the error branch, and the kind field is what the renderer uses.

3,600 Lambert solves in 29 ms — the entire grid recomputes faster than a single React render. The bright diagonal band is the launch window; hovering any cell surfaces the underlying CellOk payload (Δv, TOF, solver iterations).

The porkchop sweep is where the demo gets interesting, because it forces the Result-vs-Option distinction.

A porkchop run computes one Lambert solve for every cell in a (departure date × arrival date) grid. The default grid is 60×60 = 3,600 cells. Some cells succeed; some fail because of bad geometry, non-positive TOF (arrival before departure — non-physical), or solver-internal reasons.

Now, what’s the type of “the best cell across the whole grid”? If every cell errored, there is nothing to report. That’s not a failure with a reason — there’s no value range to compute extrema over. It’s just absence. src/lib/porkchop.ts:61:

This is the rule the design keeps coming back to: model failure-with-reason as Result<T, E>; model plain absence as Option<T>. Don’t flatten one into the other. The types tell you, six months later, what the empty case actually means.

The per-cell pipeline is three verbs from the pipelines library (src/lib/porkchop.ts:154):

SolveResult is Result<LambertResponse, LambertErrorOutput> from the solver. mapWith rewrites the success branch into a CellOk (which carries the Δv we care about plus the trajectory data). mapErrWith rewrites the error branch into a CellReason (which is LambertErrorOutput["kind"] | "non-physical"). The output type is Result<CellOk, CellReason>, which is exactly Cell. We never asked “ok or err?”; each verb transforms its own branch and passes the other through, so user code never pokes at the tag.
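A minimal sketch of that per-cell transformation, assuming simplified response and error shapes and hand-rolled `mapWith`/`mapErrWith` stand-ins. The Δv formula is the usual porkchop convention (departure burn plus arrival burn, each measured against the planet's velocity); whether the demo computes it exactly this way, and where it produces "non-physical", are assumptions.

```typescript
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };
type Vec3 = [number, number, number];

const ok = <T>(value: T): Result<T, never> => ({ ok: true, value });
const err = <E>(error: E): Result<never, E> => ({ ok: false, error });
const mapWith =
  <T, U>(fn: (value: T) => U) =>
  <E>(result: Result<T, E>): Result<U, E> =>
    result.ok ? ok(fn(result.value)) : result;
const mapErrWith =
  <E, F>(fn: (error: E) => F) =>
  <T>(result: Result<T, E>): Result<T, F> =>
    result.ok ? result : err(fn(result.error));

// Hypothetical, simplified shapes.
type LambertResponse = { v1: Vec3; v2: Vec3; iters: number };
type LambertErrorOutput = { kind: "CollinearGeometry" | "NonPositiveTimeOfFlight" | "RevsOutOfRange" };
type SolveResult = Result<LambertResponse, LambertErrorOutput>;

type CellOk = { dv: number; tof: number; iters: number };
type CellReason = LambertErrorOutput["kind"] | "non-physical";
type Cell = Result<CellOk, CellReason>;

const relativeSpeed = (a: Vec3, b: Vec3): number =>
  Math.hypot(a[0] - b[0], a[1] - b[1], a[2] - b[2]);

// Success branch: total delta-v relative to the departure and arrival planets.
const toCellOk =
  (tof: number, vDepart: Vec3, vArrive: Vec3) =>
  (r: LambertResponse): CellOk => ({
    dv: relativeSpeed(r.v1, vDepart) + relativeSpeed(r.v2, vArrive),
    tof,
    iters: r.iters,
  });

// Error branch: keep only the discriminant.
const toReason = (e: LambertErrorOutput): CellReason => e.kind;

// Assumption: arrival-before-departure cells are tagged without calling the solver.
const toCell = (tof: number, vDepart: Vec3, vArrive: Vec3, solveCell: () => SolveResult): Cell =>
  tof <= 0
    ? err<CellReason>("non-physical")
    : mapErrWith(toReason)(mapWith(toCellOk(tof, vDepart, vArrive))(solveCell()));
```

`toCell` contains one comparison on the time of flight and no comparison on the Result tag; the two branches are rewritten by the verbs.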

mapToOption(cell) is the lift: it turns each Cell (a Result) into an Option<CellOk>. Error cells become none() and drop out of the fold. The accumulator is Option<Extrema> — none() while we haven’t seen a successful cell, some(extrema) once we have. The verb is in the library; user code is the recipe.
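The fold can be sketched as a plain reduce. The `Extrema` fields (`minDv`, `maxDv`), the `Option` representation, and the helper `widen` are assumptions for this example; the demo's accumulator may track more, such as the best cell itself.

```typescript
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };
type Option<T> = { some: true; value: T } | { some: false };

const some = <T>(value: T): Option<T> => ({ some: true, value });
const none = <T = never>(): Option<T> => ({ some: false });
const mapToOption = <T, E>(result: Result<T, E>): Option<T> =>
  result.ok ? some(result.value) : none();

// Hypothetical, simplified shapes.
type CellOk = { dv: number };
type Cell = Result<CellOk, string>;
type Extrema = { minDv: number; maxDv: number };

// First successful cell seeds the range; later ones widen it.
const widen = (acc: Option<Extrema>, cell: CellOk): Option<Extrema> =>
  acc.some
    ? some({
        minDv: Math.min(acc.value.minDv, cell.dv),
        maxDv: Math.max(acc.value.maxDv, cell.dv),
      })
    : some({ minDv: cell.dv, maxDv: cell.dv });

// Error cells lift to none and leave the accumulator unchanged.
const foldExtrema = (cells: Cell[]): Option<Extrema> =>
  cells.reduce<Option<Extrema>>((acc, cell) => {
    const hit = mapToOption(cell);
    return hit.some ? widen(acc, hit.value) : acc;
  }, none());

const mixedGrid: Cell[] = [
  { ok: true, value: { dv: 7 } },
  { ok: false, error: "non-physical" },
  { ok: true, value: { dv: 5.5 } },
];
const allFailed: Cell[] = [
  { ok: false, error: "non-physical" },
  { ok: false, error: "CollinearGeometry" },
];

const mixedExtrema = foldExtrema(mixedGrid);
const emptyExtrema = foldExtrema(allFailed);
```

A grid where every cell failed folds to none rather than to a sentinel such as `{ minDv: Infinity, maxDv: -Infinity }`, so the renderer cannot mistake "no data" for a real range.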

Three files, one mental model. The same Result that came out of the WASM crate is still the same Result here, with a transformed payload, ready for the renderer.

The whole point of carrying the railway is that you only get off at the destination. In the demo, “destination” means “we’re about to draw pixels.”

One if, at the screen. Below this line, result.value is typed as LambertResponse and TypeScript knows it.

partition splits a Result[] into successes: T[] and failures: E[] in one pass. The user code never iterates the array branching on result.ok. The library has the verb.
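A hand-rolled version of the verb shows what "one pass" means. The `successes`/`failures` field names follow the description above; the implementation and the `countByReason` helper are illustrative stand-ins, not the library's code.

```typescript
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };

// Single loop: each result lands in exactly one of the two arrays.
const partition = <T, E>(results: Result<T, E>[]): { successes: T[]; failures: E[] } => {
  const successes: T[] = [];
  const failures: E[] = [];
  for (const result of results) {
    if (result.ok) successes.push(result.value);
    else failures.push(result.error);
  }
  return { successes, failures };
};

// Hypothetical helper: tally failure reasons for a summary line.
const countByReason = (reasons: string[]): Record<string, number> =>
  reasons.reduce<Record<string, number>>((acc, reason) => {
    acc[reason] = (acc[reason] ?? 0) + 1;
    return acc;
  }, {});

const sweep: Result<number, string>[] = [
  { ok: true, value: 5.6 },
  { ok: false, error: "non-physical" },
  { ok: false, error: "CollinearGeometry" },
  { ok: true, value: 6.1 },
  { ok: false, error: "non-physical" },
];

const { successes, failures } = partition(sweep);
const reasonCounts = countByReason(failures);
```

With the split done once, "how many cells solved" is `successes.length` and the failure breakdown is a tally over `failures`, with no further branching on the tag.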

partition again on the success count. matchOpt for the best cell — none becomes null only at the React boundary, where null is the natural “nothing to render” value. Inside the data layer, it stays Option.

This is the contract paying off. The if (!result.ok) lives in ResultView, not in a hook, not in the bridge, not in the schema, not in the solver, not in the porkchop math. The renderer is the only piece that is allowed to ask the question.
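The narrowing at the render boundary can be shown without React. The function below returns a string where the demo's ResultView would return JSX; its name, the output format, and the simplified payload types are assumptions for this sketch.

```typescript
type Result<T, E> = { ok: true; value: T } | { ok: false; error: E };
type Vec3 = [number, number, number];

// Hypothetical, simplified shapes.
type LambertResponse = { v1: Vec3; v2: Vec3; iters: number };
type LambertErrorOutput = { kind: string };

const renderResult = (result: Result<LambertResponse, LambertErrorOutput>): string => {
  // The one place in the pipeline that asks "ok or err?".
  if (!result.ok) return `FAILED: ${result.error.kind}`;

  // From here on the compiler has narrowed result to the success branch,
  // so result.value is a LambertResponse with no cast or null check.
  const { v1, v2, iters } = result.value;
  return `SOLVED in ${iters} iterations, v1=(${v1.join(", ")}), v2=(${v2.join(", ")})`;
};

const solvedView = renderResult({ ok: true, value: { v1: [0, 1, 0], v2: [0, -1, 0], iters: 3 } });
const failedView = renderResult({ ok: false, error: { kind: "RevsOutOfRange" } });
```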

Of that JS, the railway-ts libraries together account for ~8 kB brotli (per the published READMEs: pipelines ~4.8 kB, use-form ~3.6 kB). The rest is React 19, react-router, and the demo’s own code. The WASM blob is 97 kB — about the size of a stock photo — and ships the entire Lambert solver, including the Izzo pipeline, the geometry stage, and structured error types.

The seams between layers can be removed; when they are, the resulting code is shorter than what it replaced; and a typed Rust solver running in your browser doesn’t have to be the scary part of the codebase.
